As native Non-Volatile Memory Express (NVMe®) shared-storage arrays continue to enhance our ability to store and access more information faster across a much bigger network, customers of all sizes (enterprise, mid-market and SMB) confront a common question: what is required to take advantage of this quantum leap forward in speed and capacity?

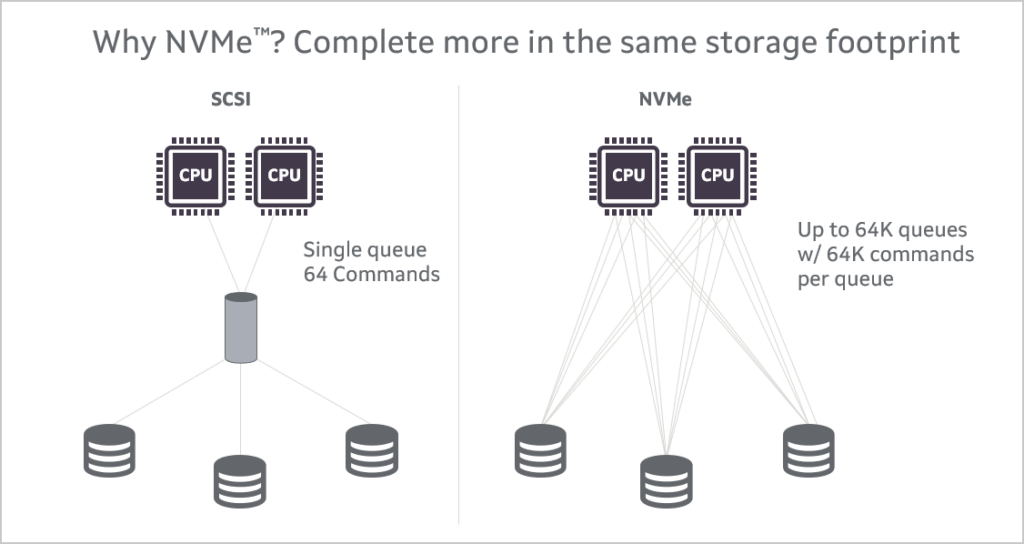

Of course, NVMe technology itself is not new; it is commonly found in laptops, servers and enterprise storage arrays. NVMe provides an efficient command set designed specifically for memory-based storage, delivers higher performance over PCIe 3.0 or PCIe 4.0 bus architectures, and, with 64,000 command queues and 64,000 commands per queue, can scale much further than other storage protocols.
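To put the queue figures in perspective, the short Python sketch below multiplies them out. The NVMe numbers are the ones quoted above; the AHCI/SATA comparison point (one queue, 32 commands) is an assumption added for illustration and does not come from this article, and the function name is hypothetical.

```python
# Queue figures quoted in the article for NVMe.
NVME_QUEUES = 64_000
NVME_COMMANDS_PER_QUEUE = 64_000

# Comparison point: an assumption added for illustration (AHCI/SATA exposes
# a single queue of 32 commands). It is not taken from the article.
AHCI_QUEUES = 1
AHCI_COMMANDS_PER_QUEUE = 32


def max_outstanding_commands(queues: int, commands_per_queue: int) -> int:
    """Upper bound on commands in flight if every queue were completely full."""
    return queues * commands_per_queue


nvme_total = max_outstanding_commands(NVME_QUEUES, NVME_COMMANDS_PER_QUEUE)
ahci_total = max_outstanding_commands(AHCI_QUEUES, AHCI_COMMANDS_PER_QUEUE)
ratio = nvme_total // ahci_total

print(f"NVMe theoretical maximum: {nvme_total:,} outstanding commands")
print(f"AHCI theoretical maximum: {ahci_total:,} outstanding commands")
print(f"Ratio: {ratio:,}x")
```

These are theoretical ceilings; real devices and drivers typically expose far fewer queues, so the value of the exercise is the order of magnitude, not the exact number.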

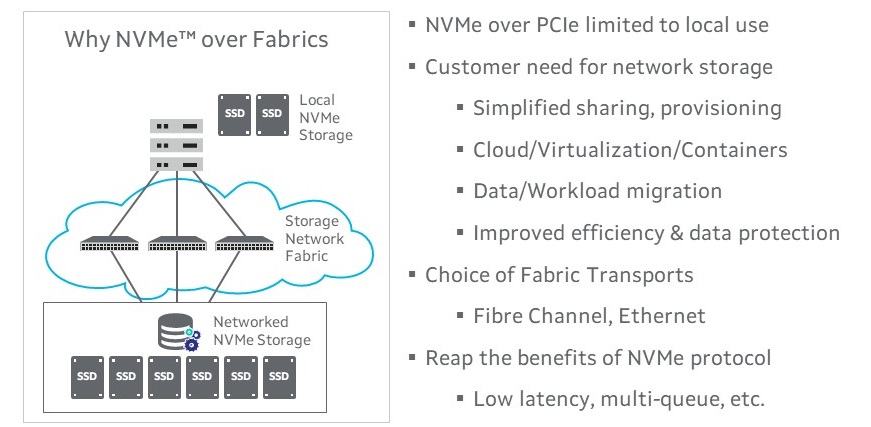

Unfortunately, most of the NVMe in use today is held captive in the system in which it is installed. While a few storage vendors offer NVMe arrays on the market today, the vast majority of enterprise datacenter and mid-market customers are still using traditional storage area networks (SANs), running the SCSI protocol over either Fibre Channel or Ethernet.

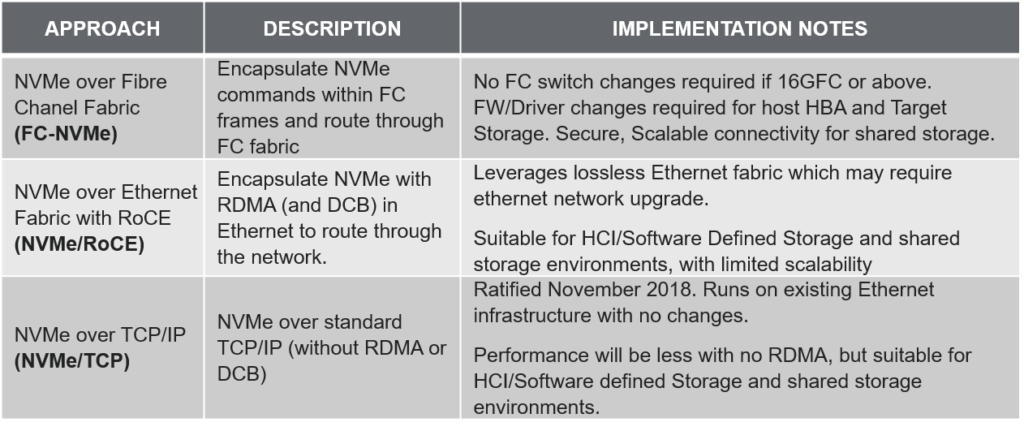

The newest storage networks, however, will be enabled by what we call NVMe over Fabrics (NVMe-oF) networks. As with SCSI today, NVMe-oF will offer users a choice of transport protocols. Today, three standard protocols are likely to make significant headway in the marketplace: NVMe over Fibre Channel (FC-NVMe), NVMe over RoCE RDMA (NVMe/RoCE) and NVMe over TCP (NVMe/TCP).

If NVMe over Fabrics is to achieve its true potential, however, three major elements need to align. First, users will need an NVMe-capable storage network infrastructure in place. Second, all of the major operating system (O/S) vendors will need to provide support for NVMe-oF. Third, customers will need disk array systems that feature native NVMe. Let’s look at each of these in order.

NVMe Storage Network Infrastructure

In addition to Marvell, several leading network and SAN connectivity vendors support one or more varieties of NVMe-oF infrastructure today. This storage network infrastructure (also called the storage fabric) is made up of two main components: the host adapter, which provides server connectivity to the storage fabric, and the switch infrastructure, which provides all the traffic routing, monitoring and congestion management.

On the Fibre Channel side, today’s Enhanced 16Gb Fibre Channel (FC) host bus adapters (HBAs) and 32Gb FC HBAs already support FC-NVMe. This includes the Marvell® QLogic® 2690 Series Enhanced 16GFC, 2740 Series 32GFC and 2770 Series Enhanced 32GFC HBAs.

On the Fibre Channel switch side, no significant changes are needed to transition from SCSI-based connectivity to NVMe technology, as the FC switch is agnostic about the payload data. The job of the FC switch is simply to route FC frames from point to point and deliver them in order, with the lowest possible latency. That means any 16GFC or greater FC switch is fully FC-NVMe compatible.

A key decision regarding FC-NVMe infrastructure, however, is whether or not to support both legacy SCSI and next-generation NVMe protocols simultaneously. When customers eventually deploy new NVMe-based storage arrays (and many will over the next three years), they are not going to simply discard their existing SCSI-based systems. In most cases, customers will want individual ports on individual server HBAs that can communicate using both SCSI and NVMe concurrently. Fortunately, Marvell’s QLogic 16GFC/32GFC portfolio does support concurrent SCSI and NVMe, all with the same firmware and a single driver. Using a single driver greatly reduces complexity compared to alternative solutions, which typically require two drivers (one for FC running SCSI and another for FC-NVMe).

If we look at Ethernet, which is the other popular transport protocol for storage networks, there is one option for NVMe-oF connectivity today and a second option on the horizon. Currently, customers can already deploy NVMe/RoCE infrastructure to support NVMe connectivity to shared storage. This requires RoCE RDMA-enabled Ethernet adapters in the host, and Ethernet switching that is configured to support a lossless Ethernet environment. There are a variety of 10/25/50/100GbE network adapters on the market today that support RoCE RDMA, including the Marvell FastLinQ® 41000 Series and the 45000 Series adapters.

On the switching side, most 10/25/100GbE switches that have shipped in the past 2-3 years support data center bridging (DCB) and priority flow control (PFC), and can therefore provide the lossless Ethernet environment that a low-latency, high-performance NVMe/RoCE fabric requires.

While customers may have to reconfigure their networks to enable these features and set up the lossless fabric, these features will likely be supported in any newer Ethernet switch or director. One point of caution: with lossless Ethernet networks, scalability is typically limited to only 1 or 2 hops. For high scalability environments, consider alternative approaches to the NVMe storage fabric.

One such alternative is NVMe/TCP. This is a relatively new protocol (ratified by NVM Express in late 2018), and as such is not widely available today. However, the advantage of NVMe/TCP is that it runs on today’s TCP stack, leveraging TCP’s congestion control mechanisms. That means there’s no need for a tuned environment (like that required with NVMe/RoCE), and NVMe/TCP can scale right along with your network. Think of NVMe/TCP in the same way as you do iSCSI today. Like iSCSI, NVMe/TCP will provide good performance, work with existing infrastructure, and be highly scalable. For those customers seeking the best mix of performance and ease of implementation, NVMe/TCP will be the best bet.
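From the host's point of view, the two Ethernet options differ mainly in the transport type requested at connect time. As a rough illustration, the Python sketch below builds (but does not run) a Linux `nvme-cli` connect command for either fabric. The helper name, the target address (from the reserved documentation range), the subsystem NQN and the choice of port 4420 are all assumptions made for the example, not values from this article.

```python
import shlex
from typing import List

# nvme-cli transport names for the two Ethernet fabrics discussed above.
ETHERNET_TRANSPORTS = {"NVMe/RoCE": "rdma", "NVMe/TCP": "tcp"}


def build_connect_command(fabric: str, target_ip: str,
                          subsystem_nqn: str, port: int = 4420) -> List[str]:
    """Return the argv list for an `nvme connect` call (hypothetical helper).

    The command is only constructed here, never executed.
    """
    if fabric not in ETHERNET_TRANSPORTS:
        raise ValueError(f"unsupported fabric: {fabric!r}")
    return [
        "nvme", "connect",
        "--transport", ETHERNET_TRANSPORTS[fabric],
        "--traddr", target_ip,
        "--trsvcid", str(port),
        "--nqn", subsystem_nqn,
    ]


# Placeholder target values, chosen only for illustration.
EXAMPLE_IP = "192.0.2.10"
EXAMPLE_NQN = "nqn.2014-08.org.example:subsys1"

for fabric in ETHERNET_TRANSPORTS:
    print(shlex.join(build_connect_command(fabric, EXAMPLE_IP, EXAMPLE_NQN)))
```

The host-side command is nearly identical in both cases; the difference the text describes lies in the network underneath, where NVMe/RoCE additionally needs RDMA-capable adapters and a lossless switch configuration.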

Because there is limited operating system (O/S) support for NVMe/TCP (more on this below), I/O vendors are not currently shipping firmware and drivers that support NVMe/TCP. But a few, like Marvell, have adapters that, from a hardware standpoint, are NVMe/TCP-ready; all that will be required is a firmware update in the future to enable the functionality. Notably, Marvell will support NVMe over TCP with full hardware offload on its FastLinQ adapters in the future. This will enable our NVMe/TCP adapters to deliver high performance and low latency that rivals NVMe/RoCE implementations.

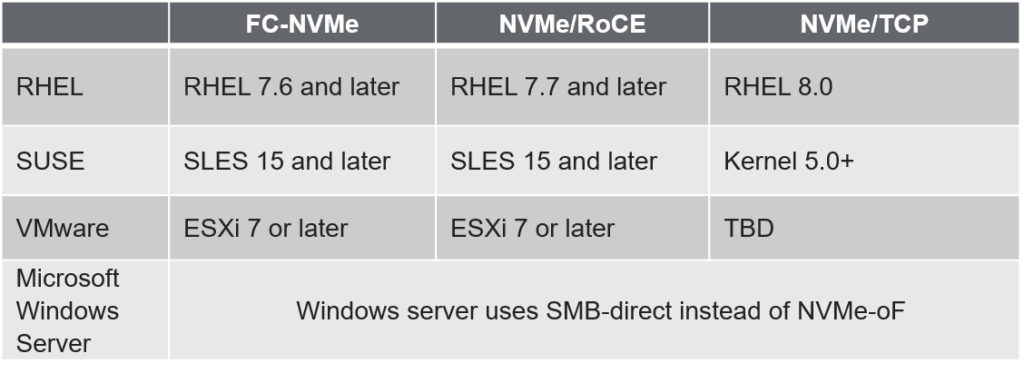

While it’s great that there is already infrastructure to support NVMe-oF implementations, that’s only the first part of the equation. Next comes O/S support. When it comes to support for NVMe-oF, the major O/S vendors are all in different places; see the table below for a current (August 2020) summary. The major Linux distributions from Red Hat (RHEL) and SUSE support both FC-NVMe and NVMe/RoCE and have limited support for NVMe/TCP. VMware, beginning with ESXi 7.0, supports both FC-NVMe and NVMe/RoCE but does not yet support NVMe/TCP. Microsoft Windows Server currently uses the SMB Direct network protocol and offers no support for any NVMe-oF technology today.

With VMware ESXi 7.0, be aware of a couple of caveats: VMware does not currently support FC-NVMe or NVMe/RoCE in vSAN or with vVols implementations. However, support for these configurations, along with support for NVMe/TCP, is expected in future releases.

A few storage array vendors have released mid-range and enterprise-class storage arrays that are NVMe-native. NetApp sells arrays, available today, that support both NVMe/RoCE and FC-NVMe. Pure Storage offers NVMe arrays that support NVMe/RoCE, with plans to support FC-NVMe and NVMe/TCP in the future. In late 2019, Dell EMC introduced its PowerMax line of flash storage that supports FC-NVMe. This year and next, other storage vendors will be bringing arrays to market that will support both NVMe/RoCE and FC-NVMe. We expect storage arrays that support NVMe/TCP will become available in the same time frame.
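Because the elements have to line up, one way to reason about a planned deployment is to intersect what the operating system and the array each support. The Python sketch below encodes only the August 2020 snapshot described in the text above; the dictionary layout and function name are hypothetical, "limited" NVMe/TCP support on Linux is deliberately left out, and host adapter support is not modeled.

```python
from typing import Dict, FrozenSet, List

# O/S support as described in the text (August 2020 snapshot).
# Linux NVMe/TCP support is described as "limited" and is omitted here.
OS_SUPPORT: Dict[str, FrozenSet[str]] = {
    "Linux (RHEL/SUSE)": frozenset({"FC-NVMe", "NVMe/RoCE"}),
    "VMware ESXi 7.0": frozenset({"FC-NVMe", "NVMe/RoCE"}),
    "Windows Server": frozenset(),
}

# Array support as described in the text; planned features are not included.
ARRAY_SUPPORT: Dict[str, FrozenSet[str]] = {
    "NetApp": frozenset({"FC-NVMe", "NVMe/RoCE"}),
    "Pure Storage": frozenset({"NVMe/RoCE"}),
    "Dell EMC PowerMax": frozenset({"FC-NVMe"}),
}


def usable_transports(os_name: str, array_name: str) -> List[str]:
    """Transports supported by both the O/S and the array (hypothetical helper)."""
    return sorted(OS_SUPPORT[os_name] & ARRAY_SUPPORT[array_name])


for os_name in OS_SUPPORT:
    for array_name in ARRAY_SUPPORT:
        common = usable_transports(os_name, array_name) or ["none"]
        print(f"{os_name} + {array_name}: {', '.join(common)}")
```

A snapshot like this goes stale quickly, so any real planning should start from the vendors' current interoperability matrices rather than from these hard-coded sets.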

Future-proof your investments by anticipating NVMe-oF tomorrow

Altogether, we are not too far away from having all the elements in place to make NVMe-oF a reality in the data center. If you expect the servers you are deploying today to operate for the next five years, there is no doubt they will need to connect to NVMe-native storage during that time. So plan ahead.

The key from an I/O and infrastructure perspective is to make sure you are laying the groundwork today to be able to implement NVMe-oF tomorrow. Whether that’s Fibre Channel or Ethernet, customers should be deploying I/O technology that supports NVMe-oF today. Specifically, that means deploying Enhanced 16GFC or 32GFC HBAs and switching infrastructure for Fibre Channel SAN connectivity. This includes the Marvell QLogic 2690, 2740 or 2770 Series Fibre Channel HBAs. For Ethernet, this includes Marvell’s FastLinQ 41000/45000 Series Ethernet adapter technology.

These advances represent a big leap forward and will deliver great benefits to customers. The sooner we build industry consensus around the leading protocols, the faster these benefits can be realized.

For more information on Marvell Fibre Channel and Ethernet technology, go to www.marvell.com. For technology specific to our OEM customer servers and storage, go to www.marvell.com/hpe or www.marvell.com/dell.
