Archive for the 'Networking' Category

-

June 27, 2023

Scaling AI Infrastructure with High-Speed Optical Connectivity

By Suhas Nayak, Senior Director of Solutions Marketing, Marvell

In the world of artificial intelligence (AI), where compute performance often steals the spotlight, there's an unsung hero working tirelessly behind the scenes. It's something that connects the dots and propels AI platforms to new frontiers. Welcome to the realm of optical connectivity, where data transfer becomes lightning-fast and AI's true potential is unleashed. But wait, before you dismiss the idea of optical connectivity as just another technical detail, let's pause and reflect. Think about it: every breakthrough in AI, every mind-bending innovation, is built on the shoulders of data—massive amounts of it. And to keep up with the insatiable appetite of AI workloads, we need more than just raw compute power. We need a seamless, high-speed highway that allows data to flow freely, powering AI platforms to conquer new challenges.

In this post, I’ll explain the importance of optical connectivity, particularly the role of DSP-based optical connectivity, in driving scalable AI platforms in the cloud. So buckle up and get ready to embark on a journey to unlock the true power of AI together.

-

June 13, 2023

FC-NVMe Goes Mainstream for Next-Generation Block Storage from HPE

By Todd Owens, Field Marketing Director, Marvell

While Fibre Channel (FC) has been around for a couple of decades now, the Fibre Channel industry continues to develop the technology in ways that keep it at the forefront of the data center for shared storage connectivity. FC has always been a reliable technology, and continued innovations in performance, security and manageability have made Fibre Channel I/O the go-to connectivity option for business-critical applications that leverage the most advanced shared storage arrays.

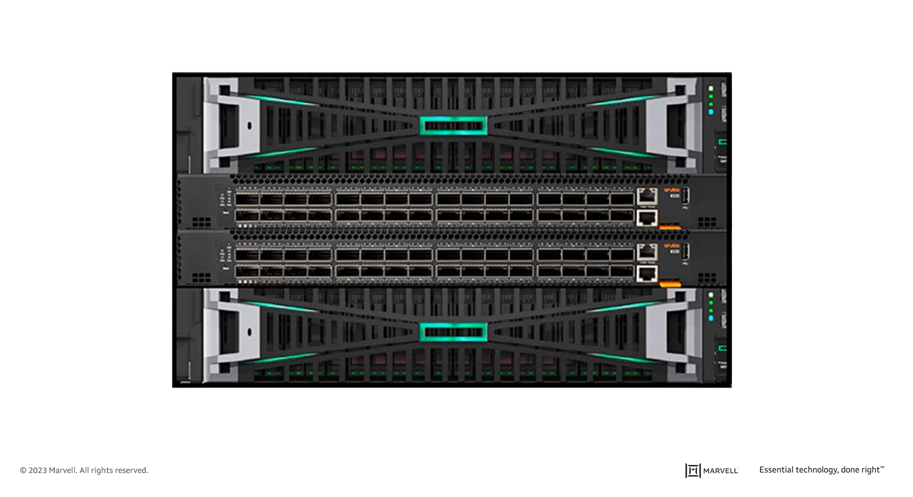

A development that highlights the progress and significance of Fibre Channel is Hewlett Packard Enterprise’s (HPE) recent announcement of the latest offering in its Storage as a Service (SaaS) lineup with 32Gb Fibre Channel connectivity. HPE GreenLake for Block Storage MP, powered by HPE Alletra Storage MP hardware, features a next-generation platform connected to the storage area network (SAN) using either traditional SCSI-based FC or NVMe over FC connectivity. This innovative solution not only provides customers with highly scalable capabilities but also delivers cloud-like management, allowing HPE customers to consume block storage any way they desire: own and manage, outsource management, or consume on demand.

HPE GreenLake for Block Storage powered by Alletra Storage MP

At launch, HPE is providing FC connectivity between this storage system and the host servers, supporting both FC-SCSI and native FC-NVMe. HPE plans to provide additional connectivity options in the future, but the fact that it prioritized FC connectivity speaks volumes about customer demand for mature, reliable, low-latency FC technology.

-

February 21, 2023

Marvell and Aviz Networks Collaborate to Drive SONiC Deployment in Cloud and Enterprise Data Centers

By Kant Deshpande, Director, Product Management, Marvell

Disaggregation is the future

Disaggregation, the decoupling of hardware and software, is arguably the future of networking. Disaggregation lets customers select best-of-breed hardware and software, enabling rapid innovation by separating the hardware and software development paths. Disaggregation started with server virtualization and is now being applied to storage and networking. In networking, disaggregation promises that any network operating system (NOS) can be integrated with any switch silicon. Open-source standards such as ONIE (Open Network Install Environment) allow a network switch to load and install any NOS during the boot process.
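To make "any NOS on any switch silicon" concrete, here is a minimal Python sketch of the pattern behind it: the NOS is written against a vendor-neutral hardware interface, and each silicon vendor supplies an implementation. SONiC does this through the Switch Abstraction Interface (SAI), but the classes and methods below (`SwitchSiliconDriver`, `VendorADriver`, `VendorBDriver`, `NetworkOS`) are hypothetical illustrations, not SAI's actual API.

```python
from abc import ABC, abstractmethod


class SwitchSiliconDriver(ABC):
    """Hypothetical vendor-neutral interface that a NOS programs against.

    Real NOSes use far richer interfaces (SONiC, for example, uses SAI);
    this class only illustrates the hardware/software decoupling.
    """

    @abstractmethod
    def create_vlan(self, vlan_id: int) -> None: ...

    @abstractmethod
    def add_port_to_vlan(self, port: str, vlan_id: int) -> None: ...


class VendorADriver(SwitchSiliconDriver):
    """Stand-in for one vendor's ASIC SDK (hypothetical)."""

    def __init__(self) -> None:
        self.vlans: dict[int, set[str]] = {}

    def create_vlan(self, vlan_id: int) -> None:
        self.vlans.setdefault(vlan_id, set())

    def add_port_to_vlan(self, port: str, vlan_id: int) -> None:
        self.vlans[vlan_id].add(port)


class VendorBDriver(SwitchSiliconDriver):
    """A second, differently implemented ASIC backend (hypothetical)."""

    def __init__(self) -> None:
        self.table: list[tuple[int, str]] = []

    def create_vlan(self, vlan_id: int) -> None:
        pass  # this imaginary chip creates VLANs implicitly on first use

    def add_port_to_vlan(self, port: str, vlan_id: int) -> None:
        self.table.append((vlan_id, port))


class NetworkOS:
    """The NOS depends only on the abstract interface, never on a vendor SDK."""

    def __init__(self, driver: SwitchSiliconDriver) -> None:
        self.driver = driver

    def configure_access_vlan(self, vlan_id: int, ports: list[str]) -> None:
        self.driver.create_vlan(vlan_id)
        for port in ports:
            self.driver.add_port_to_vlan(port, vlan_id)


if __name__ == "__main__":
    # The same NOS code runs unchanged on either (imaginary) piece of silicon.
    for driver in (VendorADriver(), VendorBDriver()):
        NetworkOS(driver).configure_access_vlan(10, ["Ethernet0", "Ethernet4"])
        print(type(driver).__name__, vars(driver))
```

Swapping the ASIC means swapping the driver object while `NetworkOS` stays untouched, which is the separation of development paths the paragraph describes.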

SONiC: the Linux of networking OS

Software for Open Networking in the Cloud (SONiC) has been gaining momentum as the preferred open-source cloud-scale network operating system (NOS). In fact, Gartner predicts that by 2025, 40% of organizations that operate large data center networks (greater than 200 switches) will run SONiC in a production environment.[i] According to Gartner, due to rapidly expanding customer interest and a commercial ecosystem, there is a strong possibility that SONiC will become analogous to Linux for network operating systems in the next three to six years.

-

February 14, 2023

The Three Things Next-Generation Data Centers Need from Networking

By Amit Sanyal, Senior Director, Product Marketing, Marvell

Data centers are arguably the most important buildings in the world. Virtually everything we do—from ordinary business transactions to keeping in touch with relatives and friends—is accomplished, or at least assisted, by racks of equipment in large, low-slung facilities.

And whether they know it or not, your family and friends are causing data center operators to spend more money. But it’s for a good cause: it allows your family and friends (and you) to continue their voracious consumption, purchasing and sharing of every kind of content—via the cloud.

Of course, it’s not only the personal habits of your family and friends that are causing operators to spend. Enterprises are equally responsible. They’re collecting data like never before, storing it in data lakes and applying analytics and machine learning tools, both to improve user experience (via recommendations, for example) and to process and analyze that data for economic gain. This is on top of the relentless, expanding adoption of cloud services.

-

January 04, 2023

Software-Defined Networking for the Software-Defined Vehicle

By Amir Bar-Niv, VP of Marketing, Automotive Business Unit, Marvell and John Heinlein, Chief Marketing Officer, Sonatus and Simon Edelhaus, VP SW, Automotive Business Unit, Marvell

The software-defined vehicle (SDV) is one of the newest and most interesting megatrends in the automotive industry. As we discussed in a previous blog, the reason that this new architectural—and business—model will be successful is the advantages it offers to all stakeholders:

- The OEMs (car manufacturers) will gain new revenue streams from aftermarket services and new applications;

- The car owners will easily upgrade their vehicle features and functions; and

- The mobile operators will profit from increased vehicle data consumption driven by new applications.

What is a software-defined vehicle? While there is no official definition, the term reflects the change in the way software is being used in vehicle design to enable flexibility and extensibility. To better understand the software-defined vehicle, it helps to first examine the current approach.

Today’s embedded control units (ECUs) that manage car functions do include software; however, the software in each ECU is often incompatible with and isolated from other modules. When updates are required, the vehicle owner must visit the dealer service center, which inconveniences the owner and is costly for the manufacturer.

-

December 05, 2022

Leading Lights Award Recognizes Deneb CDSP Leadership

By Johnny Truong, Senior Manager, Public Relations, Marvell

At this week’s Leading Lights Awards Ceremony, hosted by Light Reading, Editor-in-Chief Phil Harvey announced that the Marvell® Deneb™ Coherent Digital Signal Processor (CDSP) is the winner of the Most Innovative Service Provider Transport Solution category. This recognition is awarded to the optical systems vendor or optical components vendor providing the most innovative optical transport solution for service provider customers.

Driving the industry's largest standards-based ecosystem, the Marvell Deneb CDSP enables disaggregation, which is critical for carriers to lower their CAPEX and OPEX as they increase network capacity. This recognition underscores Marvell’s success in bringing leading-edge density and performance optimization advantages to carrier networks.

In its 18th year, Leading Lights is Light Reading’s flagship awards program, which recognizes top companies and executives for their outstanding achievements in next-generation communications technology, applications, services, strategies, and innovations.

Visit the Light Reading blog for a full list of categories, finalists and winners.

-

November 28, 2022

A Marvell-ous Hack Indeed – Winning the Hearts of SONiC Users

By Kishore Atreya, Director of Product Management, Marvell

Recently the Linux Foundation hosted its annual ONE Summit for open networking, edge projects and solutions. For the first time, this year’s event included a “mini-summit” for SONiC, an open-source network operating system targeted at data center applications that has been widely adopted by cloud customers. A variety of industry members gave presentations, including Marvell’s very own Vijay Vyas Mohan, who presented on the topic of Extensible Platform SerDes Libraries. In addition, the SONiC mini-summit included a hackathon to motivate users and developers to find new ways to solve customer problems.

So, what could we hack?

At Marvell, we believe that SONiC has utility not only for the data center, but also for enabling solutions that span from edge to cloud. Because it’s a data center NOS, SONiC is not optimized for edge use cases. It requires an expensive bill of materials (BOM) to run, including a powerful CPU, a minimum of 8 to 16GB of DDR memory, and an SSD. In the data center environment, these hardware resources contribute less to the BOM cost than the optics and switch ASIC do. However, for edge use cases with 1G to 10G interfaces, the cost of the processor complex, primarily driven by the NOS, can be a much more significant contributor to overall system cost. For edge disaggregation with SONiC to be viable, the hardware cost needs to be comparable to that of a typical OEM-based solution. Today, that’s not possible.
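The cost argument here is really about a ratio. As a minimal sketch, the Python below holds the processor complex cost fixed (since the NOS dictates it) and varies the rest of the BOM. All cost figures are made-up placeholders in arbitrary units, not Marvell data; only the shape of the comparison matters.

```python
def processor_share(bom: dict[str, float]) -> float:
    """Return the processor complex's fraction of total BOM cost."""
    total = sum(bom.values())
    return bom["processor_complex"] / total


# Placeholder costs (arbitrary units), invented purely for illustration.
# The processor complex is identical in both, because the same NOS sets
# its requirements; only optics and switch silicon scale with port speed.
data_center_switch = {"optics": 6000.0, "switch_asic": 2700.0, "processor_complex": 300.0}
edge_switch = {"optics": 100.0, "switch_asic": 200.0, "processor_complex": 300.0}

print(f"data center: {processor_share(data_center_switch):.0%}")  # data center: 3%
print(f"edge:        {processor_share(edge_switch):.0%}")         # edge:        50%
```

The same CPU, memory and SSD that barely register in a high-speed data center switch can end up dominating a low-cost edge box, which is why reducing what the NOS demands from the processor complex matters at the edge.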

-

November 08, 2022

TSN and Prestera DX1500: A Bridge Across the IT/OT Divide

By Reza Eltejaein, Director, Product Marketing, Marvell

Manufacturers, power utilities and other industrial companies stand to gain the most from digital transformation. Manufacturing and construction industries account for 37 percent of total energy used globally*, for instance, more than any other sector. By fine-tuning operations with AI, some manufacturers can reduce carbon emissions by up to 20 percent and save millions of dollars in the process.

Industry, however, remains relatively un-digitized, and gaps often exist between operational technology (the robots, furnaces and other equipment on factory floors) and the servers and storage systems that make up a company’s IT footprint. Without that linkage, organizations can’t take advantage of Industrial Internet of Things (IIoT) technologies, also referred to as Industry 4.0. Of the 232.6 million pieces of fixed industrial equipment installed in 2020, only 10 percent were IIoT-enabled.

Why the gap? IT often hasn’t been good enough. Plants operate on exacting specifications. Engineers and plant managers need a “live” picture of operations with continual updates on temperature, pressure, power consumption and other variables from hundreds, if not thousands, of devices. Dropped, corrupted or mis-transmitted data can lead to unanticipated downtime (a $50-billion-a-year problem) as well as injuries, blackouts, and even explosions.

To date, getting around these problems has required industrial applications to build around proprietary standards and/or complex component sets. These systems work—and work well—but they are largely cut off from the digital transformation unfolding outside the factory walls.

The new Prestera® DX1500 switch family is aimed squarely at bridging this divide, with Marvell extending its modern borderless enterprise offering into industrial applications. Based on the IEEE 802.1AS-2020 standard for Time-Sensitive Networking (TSN), Prestera DX1500 combines the performance requirements of industry with the economies of scale and pace of innovation of standards-based Ethernet technology. Additionally, we integrated the CPU and the switch—and in some models the PHY—into a single chip to dramatically reduce power, board space and design complexity.
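IEEE 802.1AS, the timing piece of the TSN family, keeps devices on a common clock using the generalized Precision Time Protocol (gPTP). As a rough sketch of how that works, the peer-delay measurement below estimates one link's propagation delay from four timestamps, and a Sync message then yields the local clock's offset. This is a simplification: real gPTP also accumulates residence time in the `correctionField`, uses two-step Follow_Up messages and filters many measurements, and the function names here are our own, not from any particular stack.

```python
from dataclasses import dataclass


@dataclass
class PdelayExchange:
    """Timestamps in nanoseconds from one peer-delay exchange.

    t1: Pdelay_Req transmitted   (requester's clock)
    t2: Pdelay_Req received      (responder's clock)
    t3: Pdelay_Resp transmitted  (responder's clock)
    t4: Pdelay_Resp received     (requester's clock)
    """
    t1: float
    t2: float
    t3: float
    t4: float


def mean_link_delay(ex: PdelayExchange, neighbor_rate_ratio: float = 1.0) -> float:
    """Requester's round trip minus the responder's turnaround, halved.

    The unknown offset between the two clocks cancels because each
    difference is taken on a single clock. neighbor_rate_ratio compensates
    for the clocks ticking at slightly different rates (1.0 = same rate).
    """
    return ((ex.t4 - ex.t1) * neighbor_rate_ratio - (ex.t3 - ex.t2)) / 2


def offset_from_master(origin_ns: float, local_rx_ns: float, link_delay_ns: float) -> float:
    """How far the local clock runs ahead of the master, from one Sync message."""
    return local_rx_ns - (origin_ns + link_delay_ns)


if __name__ == "__main__":
    # Responder's clock is 1,000 ns ahead; the true one-way delay is 100 ns.
    ex = PdelayExchange(t1=0, t2=1_100, t3=2_100, t4=1_200)
    d = mean_link_delay(ex)
    print(d)                                    # 100.0
    print(offset_from_master(5_000, 5_350, d))  # 250.0
```

Because every device can measure its own links and correct its clock this way, time-stamped sensor readings and scheduled traffic stay consistent across the plant, which is what lets standard Ethernet meet the timing needs described above.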

Done right, TSN will lower the CapEx and OpEx for industrial technology, open the door to integrating Industry 4.0 practices and simplify the process of bringing new equipment to market.

-

August 31, 2020

Arm processors in the Data Center

By Raghib Hussain, President, Products and Technologies

Last week, Marvell announced a change in our strategy for ThunderX, our Arm-based server-class processor product line. I’d like to take the opportunity to put some more context around that announcement, and our future plans in the data center market.

ThunderX is a product line that we started at Cavium, prior to our merger with Marvell in 2018. At Cavium, we had built many generations of successful processors for infrastructure applications, including our Nitrox security processor and OCTEON infrastructure processor. These processors have been deployed in the world’s most demanding data-plane applications, such as firewalls, routers, SSL acceleration, cellular base stations, and SmartNICs. Today, OCTEON is the most scalable and widely deployed multicore processor in the market.

-

August 27, 2020

How to Reap the Benefits of NVMe over Fabric in 2020

By Todd Owens, Field Marketing Director, Marvell

As native Non-Volatile Memory Express (NVMe®) shared-storage arrays continue enhancing our ability to store and access more information faster across a much bigger network, customers of all sizes – enterprise, mid-market and SMBs – confront a common question: what is required to take advantage of this quantum leap forward in speed and capacity?

Of course, NVMe technology itself is not new, and is commonly found in laptops, servers and enterprise storage arrays. NVMe provides an efficient command set that is specific to memory-based storage, delivers increased performance, is designed to run over PCIe 3.0 or PCIe 4.0 bus architectures and, by offering 64,000 command queues with 64,000 commands per queue, can provide much more scalability than other storage protocols.
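The scalability claim is easy to quantify. This small sketch multiplies queue count by queue depth using the rounded figures quoted in the paragraph, and compares the result with SATA drives using AHCI with Native Command Queuing, which allows a single queue of 32 commands. It is only a ceiling on in-flight commands, not a throughput prediction; consult the NVMe specification for exact limits.

```python
def max_outstanding_commands(queues: int, depth: int) -> int:
    """Upper bound on commands that can be in flight at once."""
    return queues * depth


# Rounded figures as quoted in the text.
nvme = max_outstanding_commands(queues=64_000, depth=64_000)
# For comparison: SATA/AHCI with Native Command Queuing has one 32-deep queue.
ahci = max_outstanding_commands(queues=1, depth=32)

print(f"NVMe: {nvme:,}")            # NVMe: 4,096,000,000
print(f"AHCI: {ahci}")              # AHCI: 32
print(f"ratio: {nvme // ahci:,}x")  # ratio: 128,000,000x
```

In practice, the bigger win is structural rather than the headline number: with many queues, each CPU core can own its own queue pair instead of contending for one shared queue.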

-

July 28, 2020

Living on the Network Edge: Security

By Alik Fishman, Director of Product Management, Marvell

In our series Living on the Network Edge, we have looked at the trends driving Intelligence, Performance and Telemetry to the network edge. In this installment, let’s look at the changing role of network security and the ways integrating security capabilities in network access can assist in effectively streamlining policy enforcement, protection, and remediation across the infrastructure.

Cybersecurity threats are now a daily struggle for businesses, which are experiencing a huge increase in hacked and breached data from sources increasingly common in the workplace, such as mobile and IoT devices. Not only is the number of security breaches going up, but breaches are also increasing in severity and duration, with the average lifecycle from breach to containment lasting nearly a year[1] and presenting expensive operational challenges. Digital transformation and the emerging technology landscape (remote access, cloud-native models, the proliferation of IoT devices, etc.) are dramatically changing networking architectures and operations, and with them introducing new security risks. To address this, enterprise infrastructure is on the verge of a remarkable change, elevating network intelligence, performance, visibility and security[2].

-

July 23, 2020

Telemetry: Can You See the Edge?

By Suresh Ravindran, Senior Director, Software Engineering

So far in our series Living on the Network Edge, we have looked at trends driving Intelligence and Performance to the network edge. In this blog, let’s look into the need for visibility into the network.

As automation trends evolve, the number of connected devices is seeing explosive growth. IDC estimates that there will be 41.6 billion connected IoT devices generating a whopping 79.4 zettabytes of data in 2025[1]. A significant portion of this traffic will be video flows and sensor traffic, which will need to be intelligently processed for applications such as personalized user services, inventory management, intrusion prevention and load balancing across a hybrid cloud model. Networking devices will need to be equipped with the ability to intelligently manage processing resources to efficiently handle huge numbers of data flows.
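Those two IDC figures imply a per-device average worth spelling out. The back-of-the-envelope calculation below assumes decimal (SI) zettabytes and data spread evenly across devices and across the year; neither assumption comes from the forecast, so treat the result as an order-of-magnitude illustration.

```python
ZETTABYTE = 10**21                # decimal (SI) zettabyte, in bytes
SECONDS_PER_YEAR = 365 * 24 * 3600

devices = 41.6e9                  # IDC forecast: connected IoT devices in 2025
data_bytes = 79.4 * ZETTABYTE     # IDC forecast: data generated in 2025

# Assumes an even spread across devices and over the whole year.
per_device_bytes = data_bytes / devices
per_device_bps = per_device_bytes * 8 / SECONDS_PER_YEAR

print(f"{per_device_bytes / 1e12:.2f} TB per device per year")  # 1.91
print(f"{per_device_bps / 1e3:.0f} kbit/s average per device")  # 484
```

Roughly half a megabit per second of sustained traffic looks modest for one device; multiplied across tens of billions of devices, it shows why the network needs to handle these flows intelligently rather than simply backhaul everything.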

-

July 16, 2020

The Need for Speed at the Edge

By George Hervey, Principal Architect, Marvell

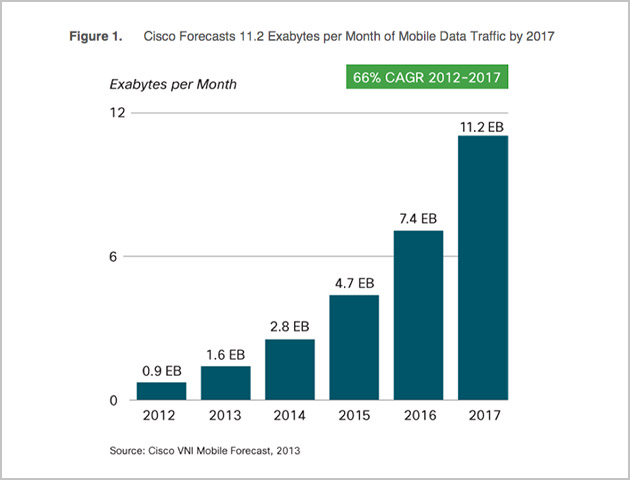

In the previous installment of TIPS to Living on the Edge, we looked at the trend of driving network intelligence to the edge. With the capacity of the latest wireless networks, such as 5G, the infrastructure will support the development of innovative applications. These applications often employ a high-frequency activity model, for example video or sensors, where activities are often initiated by the devices themselves, generating massive amounts of data that move across the network infrastructure. Cisco’s VNI Forecast Highlights predicts that global business mobile data traffic will grow six-fold from 2017 to 2022, an annual growth rate of 42 percent[1], requiring a performance upgrade of the network.
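A quick sanity check shows the two Cisco figures agree: compounding 42 percent a year over the five years from 2017 to 2022 gives just under 6x, and an exact 6x corresponds to about 43 percent a year. The helper names below are our own.

```python
def implied_multiple(annual_growth: float, years: int) -> float:
    """Total growth factor after compounding `annual_growth` for `years` years."""
    return (1 + annual_growth) ** years


def implied_cagr(multiple: float, years: int) -> float:
    """Compound annual growth rate that produces `multiple` over `years` years."""
    return multiple ** (1 / years) - 1


years = 2022 - 2017                             # five compounding periods
print(f"{implied_multiple(0.42, years):.2f}x")  # 5.77x
print(f"{implied_cagr(6, years):.1%}")          # 43.1%
```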

-

July 08, 2020

Driving Network Intelligence and Processing to the Edge

By George Hervey, Principal Architect, Marvell

The mobile phone has become an essential part of our lives as we move toward more advanced stages of the “always on, always connected” model. Our phones provide instant access to data and communication mediums, and that access influences the decisions we make and, ultimately, our behavior.

According to Cisco, global mobile networks will support more than 12 billion mobile devices and IoT connections by 2022.[1] And these mobile devices will support a variety of functions. Already, our phones replace gadgets and enable services. Why carry around a wallet when your phone can provide Apple Pay, Google Pay or make an electronic payment? Who needs to carry car keys when your phone can unlock and start your car or open your garage door? Applications now also include live streaming services that enable VR/AR experiences and sharing in real time. While future services and applications seem limited only by the imagination, they require next-generation data infrastructure to support and facilitate them.

-

April 02, 2018

Understanding Today’s Network Telemetry Requirements

By Tal Mizrahi, Feature Definition Architect, Marvell

There have, in recent years, been fundamental changes to the way in which networks are implemented, as data demands have necessitated a wider breadth of functionality and elevated degrees of operational performance. Accompanying all this is a greater need for accurate measurement of such performance benchmarks in real time, plus in-depth analysis in order to identify and subsequently resolve any underlying issues before they escalate.

The rapidly accelerating speeds and rising levels of complexity exhibited by today’s data networks mean that monitoring activities of this kind are becoming increasingly difficult to execute. Consequently, more sophisticated and inherently flexible telemetry mechanisms are now being mandated, particularly for data center and enterprise networks.

A broad spectrum of options is available for extracting telemetry data, whether through passive monitoring, active measurement, or a hybrid approach. An increasingly common practice is piggybacking telemetry information onto the data packets passing through the network. This tactic is used in both in-situ OAM (IOAM) and in-band network telemetry (INT), as well as in alternate marking performance measurement (AM-PM).
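Alternate marking shows how little per-packet overhead a hybrid method can need. In the IETF alternate-marking method (RFC 8321), the sender flips a single marking bit every fixed period, splitting traffic into alternating "colored" blocks; two measurement points each count packets per block, and the difference is that block's loss. The sketch below simulates this under simplifying assumptions (no reordering across block boundaries, no block lost entirely) and is not tied to any Marvell implementation or header format.

```python
from itertools import groupby


def marking_bit(send_time: float, period: float) -> int:
    """Alternate-marking 'color': flips between 0 and 1 every `period` seconds."""
    return int(send_time // period) % 2


def block_counts(colors: list[int]) -> list[int]:
    """Packets per block, where a block is a run of one color.

    A measurement point needs only the marking bit to do this; no sequence
    numbers or per-packet state are required.
    """
    return [sum(1 for _ in run) for _, run in groupby(colors)]


def per_block_loss(upstream: list[int], downstream: list[int]) -> list[int]:
    """Loss per block = upstream count - downstream count.

    Simplification: assumes the runs seen at the two points line up
    one-to-one (no whole block lost, no reordering across boundaries).
    """
    return [u - d for u, d in zip(block_counts(upstream), block_counts(downstream))]


send_times = [float(t) for t in range(12)]       # one packet per second
colors = [marking_bit(t, period=4.0) for t in send_times]
dropped = {5, 9}                                 # packets lost in transit
received = [c for i, c in enumerate(colors) if i not in dropped]

print(per_block_loss(colors, received))  # [0, 1, 1]
```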

At Marvell, our approach is to provide a diverse and versatile toolset through which a wide variety of telemetry approaches can be implemented, rather than being confined to a specific measurement protocol. To learn more about this subject, including longstanding passive and active measurement protocols, and the latest hybrid-based telemetry methodologies, please view the video below and download our white paper.

WHITE PAPER, Network Telemetry Solutions for Data Center and Enterprise Networks

-

February 22, 2018

Marvell to Demonstrate CyberTAN White Box Solution Incorporating the Marvell ARMADA 8040 SoC Running Telco Systems NFVTime Universal CPE OS at Mobile World Congress 2018

By Maen Suleiman, Senior Software Product Line Manager, Marvell

As more workloads move to the edge of the network, Marvell continues to advance technology that will enable the communication industry to benefit from the huge potential that network function virtualization (NFV) holds. At this year’s Mobile World Congress (Barcelona, 26th Feb to 1st Mar 2018), Marvell, along with some of its key technology collaborators, will be demonstrating a universal CPE (uCPE) solution that will enable telecom operators, service providers and enterprises to deploy needed virtual network functions (VNFs) to support their customers’ demands.

The ARMADA® 8040 uCPE solution, one of several ARMADA edge computing solutions to be introduced to the market, will be located at the Arm booth (Hall 6, Stand 6E30) and will run Telco Systems NFVTime uCPE operating system (OS) with two deployed off-the-shelf VNFs provided by 6WIND and Trend Micro, respectively, that enable virtual routing and security functionalities. The CyberTAN white box solution is designed to bring significant improvements in both cost effectiveness and system power efficiency compared to traditional offerings while also maintaining the highest degrees of security.

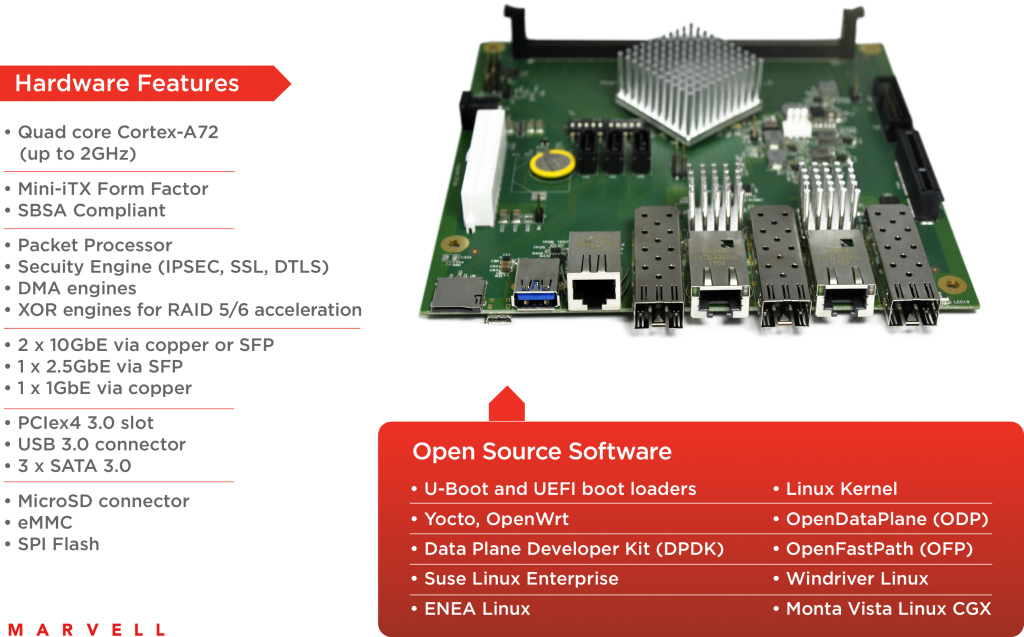

CyberTAN white box solution incorporating Marvell ARMADA 8040 SoC

The CyberTAN white box platform comprises several key Marvell technologies that together form an integrated solution designed to enable significant hardware cost savings. The platform incorporates the power-efficient Marvell® ARMADA 8040 system-on-chip (SoC) based on the Arm Cortex®-A72 quad-core processor, with up to 2GHz CPU clock speed, and a Marvell E6390x Link Street® Ethernet switch on-board. The Marvell Ethernet switch supports a 10G uplink and 8 x 1GbE ports along with integrated PHYs, four of which are auto-media GbE ports (combo ports).

The CyberTAN white box benefits from the Marvell ARMADA 8040 processor’s rich feature set and robust software ecosystem, including:

- both commercial and industrial grade offerings

- dual 10G connectivity, 10G crypto and IPsec support

- SBSA compliance

- Arm TrustZone support

- broad software support from the following: UEFI, Linux, DPDK, ODP, OP-TEE, Yocto, OpenWrt, CentOS and more

In addition, the uCPE platform supports Mini PCI Express (mPCIe) expansion slots that can enable Marvell advanced 11ac/11ax Wi-Fi or additional wired/wireless connectivity, up to 16GB DDR4 DIMM, 2 x M.2 SATA, one SATA and eMMC options for storage, SD and USB expansion slots for additional storage or other wired/wireless connectivity such as LTE.

At the Arm booth, Telco Systems will demonstrate its NFVTime uCPE operating system on the CyberTAN white box, with zero-touch provisioning (ZTP) feature. NFVTime is an intuitive NFVi-OS that facilitates the entire process of deploying VNFs onto the uCPE, and avoids the complex and frustrating management and orchestration activities normally associated with putting NFV-based services into action. The demonstration will include two main VNFs:

- A 6WIND virtual router VNF based on 6WIND Turbo Router which provides high performance, ready-to-use virtual routing and firewall functionality; and

- A Trend Micro security VNF based on Trend Micro’s Virtual Network Function Suite (VNFS) that offers elastic and high-performance network security functions which provide threat defense and enable more effective and faster protection.

Please contact your Marvell sales representative to arrange a meeting at Mobile World Congress or drop by the Arm booth (Hall 6, Stand 6E30) during the conference to see the uCPE solution in action.

-

October 03, 2017

Celebrating 20 Years of Wi-Fi - Part I

By Prabhu Loganathan, Senior Director of Marketing for Connectivity Business Unit, Marvell

You can't see it, touch it, or hear it - yet Wi-Fi® has had a tremendous impact on the modern world - and will continue to do so. From our home wireless networks to offices and public spaces, the ubiquity of high-speed connectivity without reliance on cables has radically changed the way computing happens. It would not be much of an exaggeration to say that because of ready access to Wi-Fi, we are able to lead better lives - using our laptops, tablets and portable electronic devices in a far more straightforward manner, with a high degree of mobility, and no longer having to worry about a complex tangle of wires tying us down.

Though it may be hard to believe, it has now been two decades since the original 802.11 standard was ratified by the IEEE®. This first in a series of blogs will look at the history of Wi-Fi to see how it has overcome numerous technical challenges and evolved into the ultra-fast, highly convenient wireless standard that we know today. We will then go on to discuss what it may look like tomorrow.

Unlicensed Beginnings

While we now think of 802.11 wireless technology as predominantly connecting our personal computing devices and smartphones to the Internet, it was in fact initially invented as a means to connect up humble cash registers. In the late 1980s, NCR Corporation, a maker of retail hardware and point-of-sale (PoS) computer systems, had a big problem. Its customers - department stores and supermarkets - didn't want to dig up their floors each time they changed their store layout.

A recent FCC ruling, which opened up certain frequency bands for unlicensed use, inspired what would be a game-changing idea. By using wireless connections in the unlicensed spectrum (rather than conventional wireline connections), electronic cash registers and PoS systems could be easily moved around a store without the retailer having to perform major renovation work.

Soon after this, NCR allocated the project to an engineering team out of its Netherlands office. They were set the challenge of creating a wireless communication protocol. These engineers succeeded in developing ‘WaveLAN’, which would be recognized as the precursor to Wi-Fi. Rather than preserving this as a purely proprietary protocol, NCR could see that by establishing it as a standard, the company would be able to position itself as a leader in the wireless connectivity market as it emerged. By 1990, the IEEE 802.11 working group had been formed, based on wireless communication in unlicensed spectra.

Using what were at the time innovative spread spectrum techniques to reduce interference and improve signal integrity in noisy environments, the original incarnation of Wi-Fi was finally formally standardized in 1997. It operated with a throughput of just 2 Mbits/s, but it set the foundations of what was to come.

Wireless Ethernet

Though the 802.11 wireless standard was released in 1997, it didn't take off immediately. Slow speeds and expensive hardware hampered its mass market appeal for quite a while - but things were destined to change. 10 Mbit/s Ethernet was the networking standard of the day. The IEEE 802.11 working group knew that if they could equal that, they would have a worthy wireless competitor. In 1999, they succeeded, creating 802.11b. This used the same 2.4 GHz ISM frequency band as the original 802.11 wireless standard, but it raised the throughput supported considerably, reaching 11 Mbits/s. Wireless Ethernet was finally a reality.

Soon after 802.11b was established, the IEEE working group also released 802.11a, an even faster standard. Rather than using the increasingly crowded 2.4 GHz band, it ran on the 5 GHz band and offered speeds up to a lofty 54 Mbits/s.

Because it occupied the 5 GHz frequency band, away from the popular (and thus congested) 2.4 GHz band, it had better performance in noisy environments; however, the higher carrier frequency also meant it had reduced range compared to 2.4 GHz wireless connectivity. Thanks to cheaper equipment and better nominal ranges, 802.11b proved to be the most popular wireless standard by far. But, while it was more cost effective than 802.11a, 802.11b still wasn't at a low enough price bracket for the average consumer. Routers and network adapters would still cost hundreds of dollars.
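The range trade-off between the two bands follows from free-space path loss, which grows with the square of the carrier frequency. Under idealized assumptions (free space, antennas with the same gain in both bands), the sketch below shows that 5 GHz loses about 6.4 dB more than 2.4 GHz at any given distance, which for the same link budget cuts range roughly in half. Real indoor range also depends on walls and other obstacles, which generally attenuate 5 GHz more, so the real-world gap can differ.

```python
import math


def fspl_db(distance_m: float, freq_hz: float) -> float:
    """Free-space path loss in dB: 20*log10(4*pi*d*f/c)."""
    c = 299_792_458.0  # speed of light, m/s
    return 20 * math.log10(4 * math.pi * distance_m * freq_hz / c)


# The difference is the same at any distance; 30 m is arbitrary.
extra_loss = fspl_db(30, 5.0e9) - fspl_db(30, 2.4e9)
# With a fixed link budget, free-space range scales as 1/f.
relative_range = 2.4e9 / 5.0e9

print(f"extra loss at 5 GHz: {extra_loss:.1f} dB")   # 6.4 dB
print(f"relative range:      {relative_range:.2f}")  # 0.48
```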

That all changed following a phone call from Steve Jobs. Apple was launching a new line of computers at that time and wanted to make wireless networking functionality part of it. The terms set were tough - Apple expected to have the cards at a $99 price point, but of course the volumes involved could potentially be huge. Lucent Technologies, which had acquired NCR by this stage, agreed.

While it was a difficult pill to swallow initially, the Apple deal finally put Wi-Fi in the hands of consumers and pushed it into the mainstream. PC makers saw Apple computers beating them to the punch and wanted wireless networking as well. Soon, key PC hardware makers including Dell, Toshiba, HP and IBM were all offering Wi-Fi.

Microsoft also got on the Wi-Fi bandwagon with Windows XP. Working with engineers from Lucent, Microsoft made Wi-Fi connectivity native to the operating system. Users could get wirelessly connected without having to install third party drivers or software. With the release of Windows XP, Wi-Fi was now natively supported on millions of computers worldwide - it had officially made it into the ‘big time’.

This blog post is the first in a series that charts the eventful history of Wi-Fi. The second part, which is coming soon, will bring things up to date and look at current Wi-Fi implementations.

-

July 17, 2017

Rightsizing Ethernet

By George Hervey, Principal Architect, Marvell

Implementation of cloud infrastructure is occurring at a phenomenal rate, outpacing Moore's Law. Annual growth is believed to be 30x, and as much as 100x in some cases. In order to keep up, cloud data centers are having to scale out massively, with hundreds or even thousands of servers becoming a common sight.

At this scale, networking becomes a serious challenge. More and more switches are required, increasing capital costs as well as management complexity. To tackle rising costs, network disaggregation has become an increasingly popular approach. By separating the switch hardware from the software that runs on it, vendor lock-in is reduced or even eliminated. OEM hardware can be used with software developed in-house or by third-party vendors, so that cost savings can be realized.

Though network disaggregation has tackled the immediate problem of hefty capital expenditures, it must be recognized that operating expenditures are still high. The number of managed switches basically stays the same. To reduce operating costs, the issue of network complexity has to also be tackled.

Network Disaggregation

Almost every application we use today, whether at home or in the work environment, connects to the cloud in some way. Our email providers, mobile apps, company websites, virtualized desktops and servers, all run on servers in the cloud.

For these cloud service providers, this incredible growth has been both a blessing and a challenge. As demand increases, Moore's law has struggled to keep up. Scaling data centers today involves scaling out - buying more compute and storage capacity, and subsequently investing in the networking to connect it all. The cost and complexity of managing everything can quickly add up.

Until recently, networking hardware and software had often been tied together. Buying a switch, router or firewall from one vendor would require you to run their software on it as well. Larger cloud service providers saw an opportunity. These players often had no shortage of skilled software engineers. At the massive scales they ran at, they found that buying commodity networking hardware and then running their own software on it would save them a great deal in terms of Capex.

This disaggregation of software from hardware may have been financially attractive, but it did nothing to address the complexity of the network infrastructure. There was still a great deal of room to optimize further.

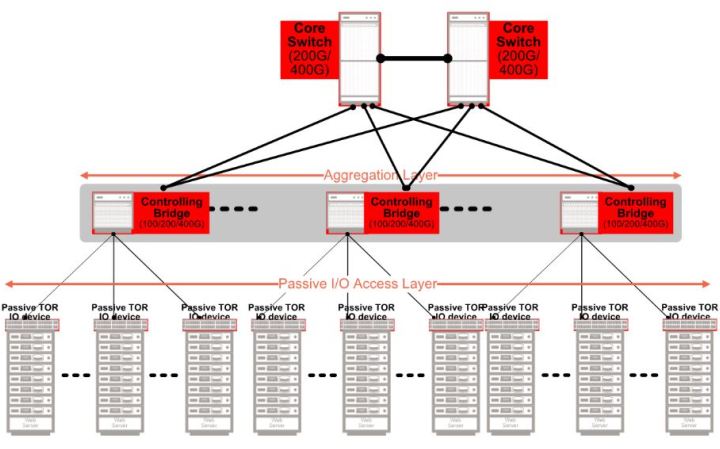

802.1BR

Today's cloud data centers rely on a layered architecture, often in a fat-tree or leaf-spine arrangement. Rows of racks, each with top-of-rack (ToR) switches, are connected to upstream switches on the network spine. The ToR switches are, in fact, performing simple aggregation of network traffic. Using relatively complex, energy-consuming switches for this task results in significant capital expense, as well as management costs and no shortage of headaches.

The port extension approach outlined in the IEEE 802.1BR standard aims to streamline this architecture. By replacing ToR switches with port extenders, port connectivity is extended directly from the rack to the upstream switch. Management is consolidated onto the smaller number of switches located at the upper-layer network spine, eliminating the dozens or possibly hundreds of managed switches at the rack level.
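A rough way to see the management savings is simply to count managed switches. The sketch below assumes a two-tier fabric with one ToR device per rack; the rack and spine counts are hypothetical values chosen for illustration, not figures from this post.

```python
def managed_switch_count(racks: int, spine_switches: int, port_extenders: bool) -> int:
    """Count independently managed switches in a simple two-tier fabric.

    Assumes one ToR device per rack (a hypothetical simplification). With
    802.1BR port extenders at the rack level, those devices are managed
    through the controlling bridges at the spine, so only the spine
    switches are counted.
    """
    if racks < 0 or spine_switches < 0:
        raise ValueError("counts must be non-negative")
    tor_switches = 0 if port_extenders else racks
    return tor_switches + spine_switches


# Hypothetical fabric: 200 racks feeding 8 spine switches.
before = managed_switch_count(racks=200, spine_switches=8, port_extenders=False)
after = managed_switch_count(racks=200, spine_switches=8, port_extenders=True)
print(f"managed switches: {before} -> {after}")  # managed switches: 208 -> 8
```

The count of physical boxes does not shrink, but the number of devices an operator has to configure and monitor as switches drops to the spine layer.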

The reduction in switch management complexity of the port extender approach has been widely recognized, and various network switches on the market now comply with the 802.1BR standard. However, not all the benefits of this standard have actually been realized.

The Next Step in Network Disaggregation

Though many of the port extenders on the market today fulfill 802.1BR functionality, they do so using legacy components. Instead of being optimized for 802.1BR itself, they rely on traditional switches. As a consequence, they erode the potential cost and power benefits that the new architecture offers.

Designed from the ground up for 802.1BR, Marvell's Passive Intelligent Port Extender (PIPE) is specifically optimized for this architecture. PIPE is interoperable with 802.1BR-compliant upstream bridge switches from all of the industry’s leading OEMs. It enables fanless, cost-efficient port extenders to be deployed, providing upfront savings as well as ongoing operational savings for cloud data centers. Power consumption is lowered, and switch management complexity is reduced by an order of magnitude.

The first wave in network disaggregation was separating switch software from the hardware that it ran on. 802.1BR's port extender architecture is bringing about the second wave, where ports are decoupled from the switches which manage them. The modular approach to networking discussed here will result in lower costs, reduced energy consumption and greatly simplified network management.

-

July 07, 2017

Extending the Lifecycle of 3.2T Switch-Based Architecture

By Yaron Zimmerman, Senior Staff Product Line Manager, Marvell and Yaniv Kopelman, Networking and Connectivity CTO, Marvell

Data center growth has been unprecedented, driven by exponential increases in demand for cloud computing and cloud storage. While Gigabit switches proved more than sufficient just a few years ago, today even 3.2 Terabit (3.2T) switches, which currently serve as the fundamental building blocks of data center infrastructure, are being pushed to their full capacity.

While network demands have increased, Moore's law (which effectively defines the pace of the semiconductor industry) has not been able to keep up. Instead of scaling at the silicon level, data centers have had to scale out. This has come at a cost, though: ever-increasing capital and operational expenditure, as well as greater latency. Facing this challenging environment, data centers will have to take a different approach. To meet current expectations economically while retaining capacity for future growth, data centers (as we will see) need to move towards a modularized approach.

Scaling out the datacenter

Data centers will have to contend with demand for substantially greater network capacity as more services, and more data storage, migrate to the cloud. This increase in network capacity, in turn, results in demand for more silicon to support it.

To meet increasing networking capacity demands, data centers are buying ever more powerful Top-of-Rack (ToR) leaf switches. These in turn consume more power, which eats into the overall power budget and leaves less power available for the data center servers. Not only is power wasted unnecessarily, but the associated thermal management costs and overall Opex are pushed upwards as well. As these data centers scale out to meet demand, they often have to add more complex hierarchical structures to their architecture, increasing latency for both north-south and east-west traffic in the process.

The price of silicon per gate is not going down either. We used to enjoy cost reductions as process sizes shrank from 90 nm to 65 nm to 40 nm. That is no longer strictly true, however. As process sizes shrink below the 28 nm node, yields are decreasing and prices are consequently going up. Traditional methods will not solve the problems of cloud-scale data centers; instead, we need to take a modularized approach to networking.

PIPEs and Bridges

Today's data centers often run on a multi-tiered leaf and spine hierarchy. Racks with ToR switches connect to the network spine switches. These, in turn, connect to core switches, which subsequently connect to the Internet. Both the spine and top-of-rack layers are made up of full, managed switches.

By following a modularized approach, it is possible to remove the ToR switches and replace them with simple IO devices - port extenders specifically. This effectively extends the IO ports of the spine switch all the way down to the ToR. What results is a passive ToR that is unmanaged. It simply passes the packets to the spine switch. Furthermore, by taking a whole layer out of the management hierarchy, the network becomes flatter and is thus considerably easier to manage.

The spine switch now acts as the controlling bridge, managing the layer that was previously handled by the ToR switch. Through this arrangement, the network's IO ports, previously located at the ToR switch, are disaggregated from the logic at the spine switch that manages them. This modularized approach is being facilitated by the growing number of port extenders and controlling bridges now available from Marvell that are compatible with the IEEE 802.1BR bridge port extension standard.
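For readers curious how a controlling bridge tells extended ports apart: 802.1BR carries an E-TAG (EtherType 0x893F) on frames between a port extender and its controlling bridge, and its E-CID field identifies the extended port. The Python sketch below packs and unpacks the 8-byte E-TAG using the field layout as we understand it from the standard (E-PCP, E-DEI, Ingress_E-CID_base, two reserved bits, GRP, E-CID_base, and two 8-bit extension fields). The `ETag` class is a hypothetical helper, and the layout should be checked against IEEE 802.1BR before being relied on. It models a unicast E-channel only and leaves the Ingress_E-CID fields, which are used for multicast source filtering, at zero.

```python
import struct
from dataclasses import dataclass

ETAG_TPID = 0x893F  # EtherType used for the IEEE 802.1BR E-TAG

# Field widths in bits, as assumed for this sketch.
_WIDTHS = {
    "pcp": 3, "dei": 1, "ingress_ecid_base": 12, "grp": 2,
    "ecid_base": 12, "ingress_ecid_ext": 8, "ecid_ext": 8,
}


@dataclass(frozen=True)
class ETag:
    """Hypothetical helper modeling the 8-byte 802.1BR E-TAG."""
    pcp: int = 0                # E-PCP: priority
    dei: int = 0                # E-DEI: drop eligibility
    ingress_ecid_base: int = 0  # multicast source filtering; 0 in this sketch
    grp: int = 0                # 0 = unicast E-channel in this sketch
    ecid_base: int = 0          # identifies the extended port
    ingress_ecid_ext: int = 0
    ecid_ext: int = 0

    def __post_init__(self) -> None:
        # Reject values that would overflow their bit fields.
        for name, bits in _WIDTHS.items():
            value = getattr(self, name)
            if not 0 <= value < (1 << bits):
                raise ValueError(f"{name}={value} does not fit in {bits} bits")

    def pack(self) -> bytes:
        word1 = (self.pcp << 13) | (self.dei << 12) | self.ingress_ecid_base
        word2 = (self.grp << 12) | self.ecid_base  # top 2 reserved bits stay 0
        return struct.pack("!HHHBB", ETAG_TPID, word1, word2,
                           self.ingress_ecid_ext, self.ecid_ext)

    @classmethod
    def unpack(cls, data: bytes) -> "ETag":
        tpid, word1, word2, in_ext, ext = struct.unpack("!HHHBB", data[:8])
        if tpid != ETAG_TPID:
            raise ValueError(f"not an E-TAG: TPID 0x{tpid:04X}")
        return cls(pcp=word1 >> 13, dei=(word1 >> 12) & 0x1,
                   ingress_ecid_base=word1 & 0xFFF, grp=(word2 >> 12) & 0x3,
                   ecid_base=word2 & 0xFFF, ingress_ecid_ext=in_ext, ecid_ext=ext)


# Hypothetical: a frame for extended port E-CID 42 at priority 5.
tag = ETag(pcp=5, ecid_base=42)
wire = tag.pack()
print(wire.hex())  # 893fa000002a0000
assert ETag.unpack(wire) == tag
```

In this model the port extender never makes a forwarding decision; it only maps between its physical ports and E-CID values, which is what allows it to stay passive while the controlling bridge does the switching.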

Solving Data Center Scaling Challenges

The modularized port extender and controlling bridge approach allows data centers to address the full length and breadth of their scaling challenges. Port extenders reduce latency by flattening the hierarchy. Instead of conventional ‘leaf’ and ‘spine’ tiers, the port extender simply extends the IO ports of the spine switch to the ToR, so each server in the rack has a near-direct connection to the managing switch. This improves latency for north-south traffic.

The port extender also aggregates traffic from 10 Gbit Ethernet ports into higher-throughput uplinks, allowing terabit switches that have only 25, 40, or 100 Gbit Ethernet ports to communicate directly with 10 Gbit Ethernet edge devices. The passive port extender is a greatly simplified device compared to a managed switch, which translates into lower up-front costs, lower power consumption and a simpler network management scheme. Rather than dealing with both leaf and spine switches, network administrators need only focus on the managed switches at the spine layer.
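The aggregation role comes down to simple arithmetic: compare the bandwidth of the edge-facing ports with the bandwidth of the uplinks toward the spine. The port counts below are hypothetical, not a specific Marvell configuration.

```python
def oversubscription(downlinks: int, downlink_gbps: float,
                     uplinks: int, uplink_gbps: float) -> float:
    """Ratio of edge-facing bandwidth to uplink bandwidth (>1 means oversubscribed)."""
    if uplinks <= 0 or uplink_gbps <= 0:
        raise ValueError("need at least one uplink with positive speed")
    return (downlinks * downlink_gbps) / (uplinks * uplink_gbps)


# Hypothetical port extender: 48 x 10GbE server ports onto 4 x 100GbE uplinks.
ratio = oversubscription(48, 10, 4, 100)
print(f"{ratio:.1f}:1")  # 1.2:1

# Two more 100GbE uplinks would make the same device non-blocking.
print(oversubscription(48, 10, 6, 100) <= 1.0)  # True
```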

With no end in sight to the ongoing progression of network capacity, cloud-scale data centers will always have ever-increasing scaling challenges to attend to. The modularized approach described here makes those challenges solvable.

-

June 21, 2017

Making Better Use of Legacy Infrastructure

By Ron Cates

The flexibility offered by wireless networking is revolutionizing the enterprise space. High-speed Wi-Fi®, provided by standards such as IEEE 802.11ac and 802.11ax, makes it possible to deliver next-generation services and applications to users in the office, no matter where they are working. However, the higher wireless speeds involved are putting pressure on the cabling infrastructure that supports the Wi-Fi access points around an office environment. 1 Gbit/s Ethernet was more than adequate for older wireless standards and applications. Now, with greater reliance on a new generation of Wi-Fi access points and their higher uplink rates, the older infrastructure is starting to show strain. At the same time, in the server room itself, demand for high-speed storage and faster virtualized servers is placing pressure on the performance of the core Ethernet cabling that connects these systems to each other and to the wider enterprise infrastructure.

One option is to upgrade to a 10 Gbit/s Ethernet infrastructure. But this migration can be prohibitively expensive. The Cat 5e cabling found in many office and industrial environments is not designed to cope with such elevated speeds. To make use of 10 Gbit/s equipment, that old cabling needs to come out and be replaced by a new copper infrastructure based on Cat 6a. Cat 6a cabling can support 10 Gbit/s Ethernet over the full 100-meter range, whereas you would be lucky to run 10 Gbit/s over half that distance on a Cat 5e cable. In contrast to data-center environments, which are designed to cope easily with both server and networking infrastructure upgrades, enterprise cabling lying in ducts, in ceilings and below floors is hard to reach and swap out. This is especially true if you want to keep the business running while the switchover takes place.

Help is at hand with the emergence of the IEEE 802.3bz™ and NBASE-T® set of standards and the transceiver technology that goes with them. 802.3bz and NBASE-T make it possible to transmit at 2.5 Gbit/s or 5 Gbit/s across conventional Cat 5e or Cat 6 cabling at distances up to the full 100 meters. The transceiver technology leverages advances in digital signal processing (DSP) to make these higher speeds possible without requiring a change in the cabling infrastructure. NBASE-T technology, a companion to the IEEE 802.3bz standard, incorporates novel features such as downshift, which responds dynamically to interference from other sources in the cable bundle by lowering the link speed. The advantage of downshift is that it does not cut off communication unexpectedly, which provides time to diagnose the interfering cable in the bundle and perhaps reroute it alongside less sensitive cables carrying lower-speed signals.

This is where the new generation of high-density transceivers comes in. Transceivers now coming onto the market support data rates all the way from legacy 10 Mbit/s Ethernet up to the full 5 Gbit/s of 802.3bz/NBASE-T, and will auto-negotiate the most appropriate data rate with the downstream device. This makes it easy for enterprise users to upgrade the routers and switches that support their core network without having to upgrade all of the client devices. Further features, such as Virtual Cable Tester® functionality, make it easier to diagnose faults in the cabling infrastructure without resorting to specialized network instrumentation. Thanks to the integration made possible by leading-edge processes, transceivers and PHYs designed for switches can now support eight 802.3bz/NBASE-T ports in one chip. These transceivers are not only more cost-effective; they also consume far less power and PCB real estate than PHYs designed for 10 Gbit/s networks.
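The rate selection and downshift behavior described above can be modeled in a few lines. This is a simplified sketch, not the actual IEEE 802.3 auto-negotiation page exchange or the NBASE-T downshift algorithm: it assumes each side simply advertises a set of rates and the link uses the highest common one, and that on persistent interference the link steps down to the next-lower common rate instead of dropping. The function names and the access-point rate set are hypothetical.

```python
from typing import Iterable, Optional

# BASE-T rates in Mbit/s, from legacy 10 Mbit/s up to 5 Gbit/s (802.3bz/NBASE-T).
RATES_MBPS = (10, 100, 1000, 2500, 5000)


def negotiate(local: Iterable[int], partner: Iterable[int]) -> Optional[int]:
    """Simplified model: pick the highest rate both sides advertise."""
    common = set(local) & set(partner)
    return max(common) if common else None


def downshift(current: int, common: Iterable[int]) -> Optional[int]:
    """Simplified model: step to the next-lower common rate instead of dropping the link."""
    lower = [rate for rate in common if rate < current]
    return max(lower) if lower else None


switch_port = RATES_MBPS
access_point = (100, 1000, 2500)  # hypothetical client that tops out at 2.5G
rate = negotiate(switch_port, access_point)
print(rate)  # 2500

# Interference detected in the bundle: fall back rather than lose the link.
print(downshift(rate, set(switch_port) & set(access_point)))  # 1000
```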
This means these transceivers offer a much more optimized solution, with numerous benefits from a financial, thermal and logistical perspective. The result is a networking standard that meshes well with the needs of modern enterprise networks and lets the network and its equipment evolve at their own pace.

-

June 07, 2017

Community Platform Allows Easy Adoption of ARM 64-bit in Data Center, Networking and Storage Ecosystems

By Maen Suleiman, Senior Software Product Line Manager, Marvell

Marvell MACCHIATObin community board is first-of-its-kind, high-end ARM 64-bit networking and storage community board

The increasing availability of high-speed internet services is connecting people in novel and often surprising ways, and creating a raft of applications for data centers. Cloud computing, Big Data and the Internet of Things (IoT) are all starting to play a major role within the industry.

These opportunities call for innovative solutions to handle the challenges they present, many of which have not been encountered before in IT. The industry is answering that call through technologies and concepts such as software defined networking (SDN), network function virtualization (NFV) and distributed storage. Making the most of these technologies and unleashing the potential of the new applications requires a collaborative approach. The distributed nature and complexity of the solutions calls for input from many different market participants.

A key way to foster such collaboration is through open-source ecosystems. The rise of Linux has demonstrated the effectiveness of such ecosystems and has helped steer the industry towards adopting open-source solutions. (Examples: AT&T Runs Open Source White Box Switch in its Live Network, SnapRoute and Dell EMC to Help Advance Linux Foundation's OpenSwitch Project, Nokia launches AirFrame Data Center for the Open Platform NFV community)

Communities have come together through Linux to provide additional value for the ecosystem. One example is the Linux Foundation, which currently sponsors more than 50 open source projects. Its activities cover various parts of the industry, from IoT (IoTivity, EdgeX Foundry) to full NFV solutions such as the Open Platform for NFV (OPNFV). This would have been hard to conceive even a couple of years ago without the wide market acceptance of open-source communities and solutions.

Although there are numerous important open-source software projects for data-center applications, the hardware on which to run them and evaluate solutions has been in short supply. There are many ARM® development boards that have been developed and manufactured, but they primarily focus on simple applications.

All these open source software ecosystems require a development platform that can provide a high-performance central processing unit (CPU), high-speed network connectivity and large memory support. But they also need to be accessible and affordable to ARM developers. Marvell MACCHIATObin® is the first ARM 64-bit community platform for open-source software communities that provides solutions for, among others, SDN, NFV and Distributed Storage.

A high-performance ARM 64-bit community platform

The Marvell MACCHIATObin community board is a mini-ITX form-factor ARM 64-bit network- and storage-oriented community platform. It is based on the Marvell hyperscale SBSA-compliant ARMADA® 8040 system on chip (SoC), which features four high-performance Cortex®-A72 ARM 64-bit CPUs. The ARM Cortex-A72 is the latest and most powerful ARM 64-bit CPU available and supports virtualization, an increasingly important capability for data center applications.

Together with the quad-core platform, the ARMADA 8040 SoC provides two 10G Ethernet interfaces, three SATA 3.0 interfaces and support for up to 16GB of DDR4 memory to handle highly complex applications. This power does not come at the cost of affordability: the Marvell MACCHIATObin community board is priced at $349. As a result, the Marvell MACCHIATObin community board is the first affordable high-performance ARM 64-bit networking and storage community platform of its kind.

SolidRun (https://www.solid-run.com/) started shipping the Marvell MACCHIATObin community board in March 2017, providing early access to the hardware for open-source communities.

The Marvell MACCHIATObin community board is easy to deploy. It uses the compact mini-ITX form factor, so developers can choose from the many cases available for this popular standard. The ARMADA 8040 SoC itself is SBSA-compliant (http://infocenter.arm.com/help/topic/com.arm.doc.den0029/) and offers unified extensible firmware interface (UEFI) support.

The ARMADA 8040 SoC includes an advanced network packet processor that supports features such as parsing, classification, QoS mapping, shaping and metering. In addition, the SoC provides two security engines that can perform full IPsec, DTLS and other protocol-offload functions at 10G rates. To accelerate RAID 5/6, the ARMADA 8040 SoC employs high-speed DMA and XOR engines.
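RAID 5 parity is a byte-wise XOR across the data blocks of a stripe, which is exactly the kind of bulk operation a hardware XOR engine takes off the CPU. The sketch below shows the arithmetic in software; the block contents are hypothetical, and RAID 6's second parity block (which uses Galois-field arithmetic) is not shown.

```python
from typing import Sequence


def xor_blocks(blocks: Sequence[bytes]) -> bytes:
    """Byte-wise XOR of equal-length blocks: the operation an XOR engine offloads."""
    if not blocks:
        raise ValueError("need at least one block")
    size = len(blocks[0])
    if any(len(block) != size for block in blocks):
        raise ValueError("blocks must be the same length")
    out = bytearray(blocks[0])
    for block in blocks[1:]:
        for i, byte in enumerate(block):
            out[i] ^= byte
    return bytes(out)


# Hypothetical 3-disk data stripe plus one parity block (RAID 5 style).
data = [b"\x01\x02\x03\x04", b"\x10\x20\x30\x40", b"\xaa\xbb\xcc\xdd"]
parity = xor_blocks(data)

# If the disk holding data[1] fails, XOR the survivors with the parity to rebuild it.
rebuilt = xor_blocks([data[0], data[2], parity])
print(rebuilt == data[1])  # True
```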

For hardware expansion, the Marvell MACCHIATObin community board provides one PCIe 3.0 x4 slot and a USB 3.0 host connector. For non-volatile storage, options include a built-in eMMC device and a micro-SD card connector. Mass storage is available through three SATA 3.0 connectors. For debugging, developers can access the board’s processors through a virtual UART running over the micro-USB connector, a 20-pin connector for JTAG access, or two UART headers. The Marvell MACCHIATObin community board technical specifications can be found here: MACCHIATObin Specification.

Open source software enables advanced applications

The Marvell MACCHIATObin community board comes with rich open source software that includes ARM Trusted Firmware (ATF), U-Boot, UEFI, Linux Kernel, Yocto, OpenWrt, OpenDataPlane (ODP), Data Plane Development Kit (DPDK), netmap and others; many of the Marvell MACCHIATObin open source software core components are available at: https://github.com/orgs/MarvellEmbeddedProcessors/.

To provide the Marvell MACCHIATObin community board with ready-made support for the open-source platforms used at the edge and in data centers for SDN, NFV and similar applications, standard operating systems such as SUSE Linux Enterprise, CentOS, Ubuntu and others should boot and run seamlessly on the board.

As the ARMADA 8040 SoC is SBSA-compliant and supports UEFI with ACPI, and Marvell is upstreaming Linux kernel support, standard operating systems can be enabled on the Marvell MACCHIATObin community board without special porting.

On top of this core software, a wide variety of ecosystem applications needed for the data center and edge applications can be assembled.

For example, the ARMADA 8040 SoC's high-speed networking and security engines will enable the kernel netdev community to develop and maintain features such as XDP and other kernel networking features on ARM 64-bit platforms. The ARMADA 8040 SoC security engine will also enable many other Linux kernel open-source communities to implement new offloads.

Thanks to the virtualization support available on the ARM Cortex-A72 processors, virtualization projects such as KVM and Xen can be enabled on the platform. Container technologies such as LXC and Docker can also be enabled to maximize data center flexibility and to support a virtual CPE ecosystem, in which the Marvell MACCHIATObin community board can be used to develop edge applications on a 64-bit ARM platform.

In addition to the mainline Linux kernel, Marvell is upstreaming U-Boot and UEFI support, and is set to upstream and open the Marvell MACCHIATObin ODP and DPDK support. This makes the Marvell MACCHIATObin board an ideal community platform for both communities, and will open the door to related communities that have based their ecosystems on ODP or DPDK. These may be user-space network-stack communities such as OpenFastPath and FD.io, or virtual switching technologies such as Open vSwitch (OVS) or Vector Packet Processing (VPP) that can make use of both the ARMADA 8040 SoC's virtualization support and its networking capabilities. Similar to ODP and DPDK, Marvell MACCHIATObin netmap support can enable the VALE virtual switching technology or security ecosystems such as pfSense.

Thanks to its hardware features and upstreamed software support, the Marvell MACCHIATObin community board is not limited to data center SDN and NFV applications. It is highly suited as a development platform for network and security products and applications such as network routers, security appliances, IoT gateways, industrial computing, home customer-premises equipment (CPE) platforms and wireless backhaul controllers. A new level of scalable and modular solutions can be achieved by combining the Marvell MACCHIATObin community board with Marvell switches and PHY products.

Summary

The Marvell MACCHIATObin is the first of its kind: a high-performance, cost-effective networking community platform. The board supports a rich software ecosystem and has made available high-performance, high-speed networking ARM 64-bit community platforms at a price that is affordable for the majority of ARM developers, software vendors and other interested companies. It makes ARM 64-bit far more accessible than ever before for developers of solutions for use in data centers, networking and storage.

-

May 23, 2017

Marvell MACCHIATObin Community Board Now Shipping

By Maen Suleiman, Senior Software Product Line Manager, Marvell

First-of-its-kind community platform makes ARM 64-bit accessible for data center, networking and storage solution developers

As network infrastructure continues to transition to Software-Defined Networking (SDN) and Network Functions Virtualization (NFV), the industry is in great need of cost-optimized hardware platforms coupled with robust software support for the development of a variety of networking, security and storage solutions. The answer is finally here!

Now, with the shipping of the Marvell MACCHIATObin™ community board, developers and companies have access to a high-performance, affordable ARM®-based platform with the required technologies, such as an ARMv8 64-bit CPU, virtualization, high-speed networking and security accelerators, plus the added benefit of open source software. SolidRun started shipping the Marvell MACCHIATObin community board in March 2017, providing early access to the hardware for open-source communities.


The Marvell MACCHIATObin community board is a mini-ITX form-factor ARMv8 64-bit network- and storage-oriented community platform. It is based on the Marvell® hyperscale SBSA-compliant ARMADA® 8040 system on chip (SoC) (http://www.marvell.com/embedded-processors/armada-80xx/), which features four high-performance Cortex®-A72 ARM 64-bit CPU cores.

Together with the quad-core Cortex-A72 ARM 64-bit CPUs, the Marvell MACCHIATObin community board provides two 10G Ethernet interfaces, three SATA 3.0 interfaces and support for up to 16GB of DDR4 memory to handle higher-performance data center applications. This power does not come at the cost of affordability: the Marvell MACCHIATObin community board is priced at $349. As a result, it is the first affordable high-performance ARM 64-bit networking and storage community platform of its kind.

The Marvell MACCHIATObin community board is easy to deploy. It uses the compact mini-ITX form factor, so developers and companies can choose from the many cases available for this popular standard. The ARMADA 8040 SoC itself is SBSA-compliant and offers unified extensible firmware interface (UEFI) support. You can find the full specification at: http://wiki.macchiatobin.net/tiki-index.php?page=About+MACCHIATObin.

To provide the Marvell MACCHIATObin community board with ready-made support for the open-source platforms used in SDN, NFV and similar applications, Marvell is upstreaming MACCHIATObin software support to the Linux kernel, U-Boot and UEFI, and is set to upstream and open the Marvell MACCHIATObin community board for ODP and DPDK support.

In addition to upstreaming the MACCHIATObin software support, Marvell added MACCHIATObin support to the ARMADA 8040 SDK and plans to make the ARMADA 8040 SDK publicly available. Many of the ARMADA 8040 SDK components are available at: https://github.com/orgs/MarvellEmbeddedProcessors/.

For more information about the many innovative features of the Marvell MACCHIATObin community board, please visit: http://wiki.macchiatobin.net. To place an order for the Marvell MACCHIATObin community board, please go to: http://macchiatobin.net/.

-

April 27, 2017

The Challenges Of 11ac Wave 2 and 11ax in Wi-Fi Deployments: How to Cost-Effectively Upgrade to 2.5GBASE-T and 5GBASE-T

By Nick Ilyadis

The Insatiable Need for Bandwidth: Standards Trying to Keep Up

With the push for more and more Wi-Fi bandwidth, the WLAN industry, its standards committees and the Ethernet switch manufacturers are having a hard time keeping up with the need for more speed. As the industry prepares for upgrading to 802.11ac Wave 2 and the promise of 11ax, the ability of Ethernet over existing copper wiring to meet the increased transfer speeds is being challenged. And what really can’t keep up are the budgets that would be needed to physically rewire the millions of miles of cabling in the world today.

The Latest on the Latest Wireless Networking Standards: IEEE 802.11ac Wave 2 and 802.11ax

The latest 802.11ac IEEE standard is now in Wave 2. According to Webopedia’s definition, the 802.11ac-2013 update, or 802.11ac Wave 2, is an addendum to the original 802.11ac wireless specification that utilizes Multi-User, Multiple-Input, Multiple-Output (MU-MIMO) technology and other advancements to help increase theoretical maximum wireless speeds from 3.47 gigabits per second (Gbps) in the original spec to 6.93 Gbps in 802.11ac Wave 2. The original 802.11ac spec itself served as a performance boost over the 802.11n specification that preceded it, increasing wireless speeds by up to 3x. As with the initial specification, 802.11ac Wave 2 also provides backward compatibility with previous 802.11 specs, including 802.11n.

IEEE has also noted that over the past two decades, IEEE 802.11 wireless local area networks (WLANs) have experienced tremendous growth with the proliferation of IEEE 802.11 devices, becoming a major means of Internet access for mobile computing. Therefore, the IEEE 802.11ax specification is under development as well. Giving equal time to Wikipedia, its definition of 802.11ax is: a type of WLAN designed to improve overall spectral efficiency in dense deployment scenarios, with a predicted top speed of around 10 Gbps. It operates in the 2.4 GHz and 5 GHz bands and, in addition to MIMO and MU-MIMO, introduces the Orthogonal Frequency-Division Multiple Access (OFDMA) technique to improve spectral efficiency, as well as higher-order 1024-QAM (Quadrature Amplitude Modulation) support for better throughput. Though the nominal data rate is just 37 percent higher than 802.11ac, the new amendment will allow a 4X increase in user throughput. This new specification is due to be publicly released in 2019.
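The peak figures quoted above follow from standard PHY-rate arithmetic: spatial streams × data subcarriers × bits per subcarrier × coding rate ÷ OFDM symbol duration. The sketch below assumes the quoted maxima come from 160 MHz channels at the top modulation and coding scheme with the shortest guard interval (256-QAM at rate 5/6, 3.6 µs symbols and 468 data subcarriers for 802.11ac; 1024-QAM at rate 5/6, 13.6 µs symbols and 1960 data subcarriers for 802.11ax). Under those assumptions, 4 and 8 streams of 802.11ac give about 3.47 and 6.93 Gbps, and 8 streams of 802.11ax give about 9.6 Gbps, roughly 39 percent above 802.11ac and close to the figures cited.

```python
def phy_rate_mbps(streams: int, data_subcarriers: int, bits_per_subcarrier: int,
                  coding_rate: float, symbol_us: float) -> float:
    """Peak PHY rate: data bits per OFDM symbol divided by symbol time (bits/us = Mbit/s)."""
    bits_per_symbol = streams * data_subcarriers * bits_per_subcarrier * coding_rate
    return bits_per_symbol / symbol_us


# Assumed parameters: 160 MHz channel, top MCS, shortest guard interval.
ac_4ss = phy_rate_mbps(4, 468, 8, 5 / 6, 3.6)     # 802.11ac: 256-QAM, 3.2 + 0.4 us
ac_8ss = phy_rate_mbps(8, 468, 8, 5 / 6, 3.6)
ax_8ss = phy_rate_mbps(8, 1960, 10, 5 / 6, 13.6)  # 802.11ax: 1024-QAM, 12.8 + 0.8 us

print(f"11ac 4 streams: {ac_4ss:.0f} Mbit/s")  # 11ac 4 streams: 3467 Mbit/s
print(f"11ac 8 streams: {ac_8ss:.0f} Mbit/s")  # 11ac 8 streams: 6933 Mbit/s
print(f"11ax 8 streams: {ax_8ss:.0f} Mbit/s")  # 11ax 8 streams: 9608 Mbit/s
print(f"11ax vs 11ac: +{(ax_8ss / ac_8ss - 1) * 100:.0f}%")  # 11ax vs 11ac: +39%
```

The 4X user-throughput gain claimed for 802.11ax comes mainly from OFDMA scheduling in dense deployments, which a peak-rate formula like this does not capture.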

Faster “Cats”: Cat 5, 5e, 6, 6a and On

And yes, even cabling is moving up to keep up. You’ve got Cat 5, 5e, 6, 6a and 7 (search: Differences between CAT5, CAT5e, CAT6 and CAT6e Cables for specifics), but suffice it to say, each iteration is capable of moving more data faster, from the ubiquitous Cat 5 at 100 Mbps and 100 MHz over 100 meters of cabling to Cat 6a (sometimes sold as “Cat 6e”) reaching 10,000 Mbps at 500 MHz over 100 meters. Cat 7 can operate at 600 MHz over 100 meters, with more “Cats” on the way. All of this, of course, is to keep up with streaming, communications, big data or anything else being thrown at the network.

How to Keep Up Cost-Effectively with 2.5GBASE-T and 5GBASE-T

What this all boils down to is this: no matter how fast network standards or cables get, the migration to new technologies will always be balanced against the cost of attaining those speeds and technologies in the physical realm. In other words, companies must weigh the physical labor costs of upgrading millions of miles of cabling in buildings throughout the world, as well as the switches and other access points. Labor costs alone are a reason companies often try to stay in the wiring closet as long as possible, where equipment such as switches, and the physical layer (PHY) devices inside them, remains easier and more cost-effective to swap out than existing cabling.

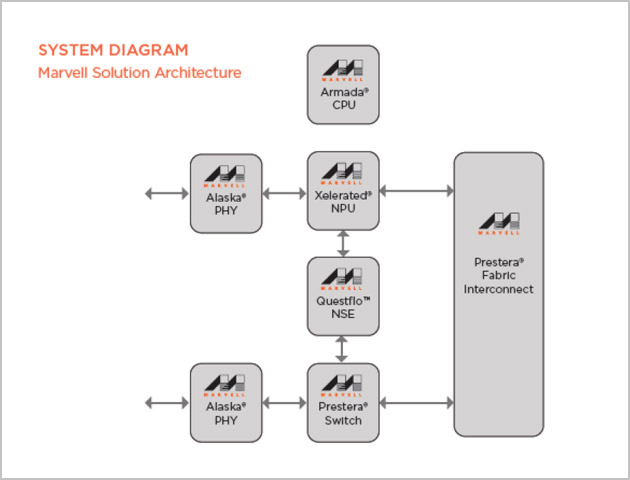

This is where Marvell steps in with a complete solution. Marvell’s products, including the Avastar wireless products, Alaska PHYs and Prestera switches, provide an optimized solution that supports 2.5 and 5 Gbps speeds over existing cabling. For example, the Marvell Avastar 88W8997 wireless processor was the industry's first 28nm, 11ac (Wave 2), 2x2 MU-MIMO combo with full support for Bluetooth 4.2 and future Bluetooth 5.0. To address switching, Marvell created the Marvell® Prestera® DX family of packet processors, which enables secure, high-density and intelligent 10GbE/2.5GbE/1GbE switching solutions at the access/edge and aggregation layers of Campus, Industrial, Small Medium Business (SMB) and Service Provider networks. And finally, the Marvell Alaska family of Ethernet transceivers are PHY devices that feature the industry's lowest power, highest performance and smallest form factor.

These transceivers help optimize form factors, port counts and cable options, with efficient power consumption and simple plug-and-play functionality, offering the most advanced and complete PHY products to the broadband market to support 2.5G and 5G data rates over Cat 5e and Cat 6 cables.
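When deciding whether an existing cable plant can stay, a simple lookup of rate versus cable category and run length is often enough for a first pass. The table below is a sketch based on nominal reach figures: 2.5GBASE-T and 5GBASE-T over Cat 5e and Cat 6 at up to 100 meters (as described above), 10GBASE-T over Cat 6a at 100 meters, and 10GBASE-T over Cat 6 at up to about 55 meters. Real links also depend on installation quality and alien crosstalk, so treat the result as a starting point rather than a guarantee; the function name is hypothetical.

```python
from typing import Optional

# Nominal maximum reach in meters per BASE-T rate (Mbit/s), per cable category.
# Assumed values for this sketch; verify against your cabling standard and vendor data.
REACH_M = {
    "cat5e": {1000: 100, 2500: 100, 5000: 100},
    "cat6": {1000: 100, 2500: 100, 5000: 100, 10000: 55},
    "cat6a": {1000: 100, 2500: 100, 5000: 100, 10000: 100},
}


def fastest_rate_mbps(category: str, run_m: float) -> Optional[int]:
    """Return the fastest BASE-T rate nominally supported on an existing run, or None."""
    reach = REACH_M[category.lower()]
    usable = [rate for rate, max_m in reach.items() if run_m <= max_m]
    return max(usable) if usable else None


print(fastest_rate_mbps("Cat5e", 90))   # 5000
print(fastest_rate_mbps("Cat6", 70))    # 5000
print(fastest_rate_mbps("Cat6", 40))    # 10000
print(fastest_rate_mbps("Cat5e", 120))  # None
```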

You mean, I don’t have to leave the wiring closet?

The longer upgrades can be confined to the wiring closet, rather than calling in electricians to rewire the building, the better companies can balance faster throughput against lower cost. The Marvell Avastar, Prestera and Alaska product families help address the upgrade to 2.5GBASE-T and 5GBASE-T over existing copper wire, keeping up with that insatiable demand for throughput without taking you out of the wiring closet. See you inside!

# # #

-

April 27, 2017

Top Eight Data Center Trends For Keeping up with High Data Bandwidth Demand

By Nick Ilyadis, VP of Portfolio Technology, Marvell

IoT devices, online video streaming, increased throughput for servers and storage solutions: all have contributed to the massive explosion of data circulating through data centers and the increasing need for greater bandwidth. IT teams have been chartered with finding solutions that support higher bandwidth and faster data speeds, yet must do so in the most cost-efficient way, a formidable task indeed. Marvell recently shared with eWeek what it sees as the top trends in data centers as they try to keep up with the unprecedented demand for ever-higher bandwidth. Below are the top eight data center trends Marvell has identified as IT teams develop the blueprint for achieving high-bandwidth, cost-effective solutions to keep up with explosive data growth.

1.) Higher Adoption of 25GbE

To support this increased need for high bandwidth, companies are evaluating whether to adopt 40GbE to the server as an upgrade from 10GbE. However, 25GbE provides more cost-effective throughput than 40GbE, since 40GbE requires more power and costlier cables. As a result, 25GbE is becoming recognized as the optimal next-generation Ethernet speed for connecting servers as data centers seek to balance cost/performance tradeoffs.

2.) The Ability to Bundle and Unbundle Channels

Historically, data centers have upgraded to higher link speeds by aggregating multiple single-lane 10GbE physical layers. Today, 100Gbps can be achieved by bundling four 25Gbps links together; alternatively, a 100GbE port can be unbundled into four independent 25GbE channels. The ability to bundle and unbundle 100GbE gives IT teams wider flexibility in moving data across their network and in adapting to changing customer needs.
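Bundling and unbundling can be modeled as simple lane arithmetic. The following Python sketch assumes every physical lane runs at 25 Gb/s and treats a port as a count of lanes; the `Port` class, the `bundle`/`unbundle` helpers and the `eth1/1`-style breakout names are hypothetical illustrations, not a switch vendor's configuration API.

```python
from dataclasses import dataclass
from typing import List

# Assumption: every physical SerDes lane carries 25 Gb/s.
LANE_GBPS = 25


@dataclass(frozen=True)
class Port:
    """A logical Ethernet port built from one or more 25G lanes (hypothetical model)."""
    name: str
    lanes: int

    @property
    def speed_gbps(self) -> int:
        # Port speed is simply lanes x per-lane rate.
        return self.lanes * LANE_GBPS


def bundle(name: str, lanes: int = 4) -> Port:
    """Aggregate several 25G lanes into one logical port (4 x 25G -> 100GbE)."""
    if lanes < 1:
        raise ValueError("a port needs at least one lane")
    return Port(name, lanes)


def unbundle(port: Port) -> List[Port]:
    """Break a multi-lane port out into independent single-lane 25GbE ports."""
    return [Port(f"{port.name}/{i + 1}", 1) for i in range(port.lanes)]


if __name__ == "__main__":
    uplink = bundle("eth1")
    print(uplink.speed_gbps)  # 100
    print([p.speed_gbps for p in unbundle(uplink)])  # [25, 25, 25, 25]
```

Unbundling never changes total capacity in this model: four 25GbE breakout ports add up to exactly the 100GbE of the bundled port.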

3.) Big Data Analytics

Increased data means increased traffic. Real-time analytics allow organizations to monitor and make adjustments as needed to effectively allocate precious network bandwidth and resources. Leveraging analytics has become a key tool for data center operators to maximize their investment.
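As one concrete example of the kind of real-time metric such analytics rely on, link utilization can be derived from two samples of an interface's byte counter. This Python sketch is a generic illustration, not a Marvell tool; the function names, the 64-bit counter width and the 80 percent alert threshold are assumptions.

```python
from typing import Dict, List, Tuple

COUNTER_MODULUS = 2 ** 64  # assumption: 64-bit octet counters that wrap to zero
Sample = Tuple[int, int, float]  # (bytes at t0, bytes at t1, link speed in Gb/s)


def link_utilization(bytes_t0: int, bytes_t1: int, interval_s: float, link_gbps: float) -> float:
    """Average utilization (0.0 to 1.0) of a link between two counter samples."""
    if interval_s <= 0:
        raise ValueError("interval must be positive")
    delta_bytes = (bytes_t1 - bytes_t0) % COUNTER_MODULUS  # tolerate one counter wrap
    capacity_bits = link_gbps * 1e9 * interval_s
    return delta_bytes * 8 / capacity_bits


def hot_links(samples: Dict[str, Sample], interval_s: float, threshold: float = 0.8) -> List[str]:
    """Names of links at or above the utilization threshold, busiest first."""
    util = {
        name: link_utilization(b0, b1, interval_s, gbps)
        for name, (b0, b1, gbps) in samples.items()
    }
    busy = [name for name, u in util.items() if u >= threshold]
    return sorted(busy, key=lambda name: util[name], reverse=True)
```

For instance, with hypothetical counters sampled 10 seconds apart, `hot_links({"spine1": (0, 28_125_000_000, 25.0), "spine2": (0, 5_000_000_000, 25.0)}, 10.0)` flags only `"spine1"`, which is running at 90 percent of its 25GbE capacity.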

4.) Growing Demand for Higher-Density Switches

Advances in semiconductor process technology to 28nm and 16nm have allowed network switches to become smaller and smaller. In the past, a 48-port switch required two chips with advanced port configurations. Today, the same result can be achieved on a single chip, which not only keeps costs down but also improves power efficiency.

5.) Power Efficiency Needed to Keep Costs Down

Energy costs are often among the highest costs incurred by data centers. Ethernet solutions designed with greater power efficiency help data centers transition to the higher GbE rates needed to keep up with the higher bandwidth demands, while keeping energy costs in check.
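The link between per-port power and operating cost is simple arithmetic. The Python sketch below estimates the yearly electricity cost of a group of ports; the wattages, port count and electricity price in the example are hypothetical placeholders rather than Marvell figures, and cooling overhead is deliberately ignored.

```python
HOURS_PER_YEAR = 24 * 365


def annual_energy_cost(watts_per_port: float, ports: int, usd_per_kwh: float) -> float:
    """Yearly electricity cost (USD) of running `ports` ports continuously."""
    kwh_per_year = watts_per_port * ports * HOURS_PER_YEAR / 1000.0
    return kwh_per_year * usd_per_kwh


def annual_savings(old_watts: float, new_watts: float, ports: int, usd_per_kwh: float) -> float:
    """Cost difference between two per-port power levels (positive means savings)."""
    return (annual_energy_cost(old_watts, ports, usd_per_kwh)
            - annual_energy_cost(new_watts, ports, usd_per_kwh))


if __name__ == "__main__":
    # Hypothetical inputs: 10,000 ports, 5 W vs. 4 W per port, $0.10 per kWh.
    print(round(annual_savings(5.0, 4.0, 10_000, 0.10)))  # 8760
```

Because the cost scales linearly with both power and port count, even a one-watt saving per port becomes significant at data center scale.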

6.) More Outsourcing of IT to the Cloud

IT organizations are not only adopting 25GbE to address increasing bandwidth demands; they are also turning to the cloud. By outsourcing IT to the cloud, organizations can free up capacity on their own networks while maintaining bandwidth.

7.) Using NVM Express-based Storage to Maximize Performance

NVM Express® (NVMe™) is a scalable host controller interface designed to address the needs of enterprise, data center and client systems that use PCIe-based solid-state drives (SSDs). By using the NVMe protocol, data centers can exploit the full performance of SSDs, creating new compute models free of the limitations of legacy rotational media. SSD performance can be maximized, while server clusters can pool storage and share data access throughout the network.

8.) Transition from Servers to Network Storage

With the growing amount of data transferred across networks, more data centers are deploying storage on the network rather than in servers. Ethernet technologies are being leveraged to attach storage to the network instead of legacy storage interconnects as the data center transitions from a traditional server model to networked storage.

As shown above, IT teams are using a variety of technologies and methods to keep up with the explosive growth in data and the rising need for data center bandwidth. What methods are you employing to keep pace with the ever-increasing demands on the data center, and how do you try to keep energy usage and costs down?

# # #

-

April 03, 2017

How the Introduction of the Cell Phone Sparked Today’s Data Demands

By Sander Arts, Interim VP of Marketing, Marvell

Forty-four years ago today, on April 3, 1973, an engineer named Martin Cooper walked down a street in Manhattan with a brick-shaped device in his hand and made history’s very first cell phone call. Weighing an impressive 2.5 pounds and standing 11 inches tall, the world’s first mobile device featured a single-line, text-only LED display screen.

Credit: Wikipedia

A lot has changed since then. Phones have gotten smaller, faster and smarter, innovating at a pace that would have been unimaginable four decades ago. Today, phone calls are just one of the many capabilities we expect from our mobile devices, along with browsing the internet, watching videos, finding directions, engaging in social media and more. All of these activities require the rapid movement and storage of data, drawing closer parallels to the original PC than to Cooper’s 2.5-pound prototype. And that’s only the beginning: the demand for data has expanded far beyond mobile.

Data Demands: to Infinity and Beyond!

Today’s consumers can access content from around the world almost instantaneously using a variety of devices, including smartphones, tablets, cars and even household appliances. Whether it’s a large-scale event such as Super Bowl LI or just another day, data usage is skyrocketing as we communicate with friends, family and strangers across the globe sharing ideas, uploading pictures, watching videos, playing games and much more.

According to a study by Domo, U.S. consumers use over 18 million megabytes of wireless data every minute. At the recent 2017 OCP U.S. Summit, Facebook shared that over 95 million photos and videos are posted on Instagram every day, and that’s only one app. As our world becomes smarter and more connected, data demands will only continue to grow.

The Next Generation of Data Movement and Storage

At Marvell, we’re focused on helping our customers move and store data securely, reliably and efficiently as we transform data movement and storage across a range of markets, from the consumer to the cloud. With the staggering amount of data the world creates and moves every day, it’s hard to believe the humble beginnings of the technology we now take for granted.

What data demands will our future devices be tasked to support? Tweet us at @marvellsemi and let us know what you think!

-

October 13, 2016

Marvell Unveils Industry’s First 25G PHY Transceiver Fully Compliant to IEEE 802.3by 25GbE Specification

By Venu Balasubramonian, Marketing Director, Connectivity, Storage and Infrastructure Business Unit, Marvell

Alaska C 88X5123 enables adoption of 25G Ethernet in datacenters and enterprise networks

Growing demand for networking bandwidth is one of the biggest pain points facing datacenters today. To keep up with increased bandwidth needs, datacenters are transitioning from 10G to 25G Ethernet (GbE). To enable this, the IEEE developed the 802.3by specifications defining Ethernet operation at 25Gbps, which were recently ratified. We are excited to introduce the high-performance Marvell Alaska C 88X5123 Ethernet transceiver, the industry’s first PHY transceiver fully compliant to the new IEEE 25GbE specification.

Availability of standards-compliant equipment is critical for the growth and widespread adoption of 25GbE. By delivering the industry’s first PHY device fully compliant to the IEEE 802.3by 25GbE specification, we are enabling our customers to address the 25GbE market by developing products and applications that meet this newly defined specification.

In addition to supporting the IEEE 802.3by 25GbE specification, our 88X5123 is also fully compliant to the IEEE 802.3bj 100GbE specification and the 25/50G Ethernet Consortium specification. The device comes in a small 17mm x 17mm package and supports eight ports of 25GbE, four ports of 50GbE or two ports of 100GbE operation. The device also supports gearboxing functionality to enable high-density 40G Ethernet solutions on switch ASICs with native 25G I/Os.
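The three port modes quoted above draw on the same pool of lanes. Assuming eight 25G lanes per interface (inferred from the eight-port 25GbE mode), with 50GbE using two lanes and 100GbE using four, the arithmetic can be checked with this Python sketch; it models lane counts only and is not the device's configuration interface.

```python
# Assumption: eight 25G lanes per interface, inferred from the 8 x 25GbE mode.
TOTAL_LANES = 8
LANE_GBPS = 25

# Lanes consumed by one port at each Ethernet rate (25G -> 1, 50G -> 2, 100G -> 4).
LANES_PER_PORT = {speed: speed // LANE_GBPS for speed in (25, 50, 100)}


def ports_for_speed(speed_gbps: int) -> int:
    """How many ports of the given speed fit into the lane budget."""
    if speed_gbps not in LANES_PER_PORT:
        raise ValueError(f"unsupported speed: {speed_gbps}G")
    return TOTAL_LANES // LANES_PER_PORT[speed_gbps]


if __name__ == "__main__":
    for speed in sorted(LANES_PER_PORT):
        n = ports_for_speed(speed)
        print(f"{n} x {speed}GbE = {n * speed}G aggregate")
```

Under these assumptions every mode works out to the same 200G of aggregate bandwidth, which is why the port count halves each time the per-port speed doubles.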

With support for long reach (LR) SerDes, and integrated forward error correction (FEC) capability, the 88X5123 supports a variety of media types including single mode and multi-mode optical modules, passive and active copper direct attach cables, and copper backplanes. The device offers a fully symmetric architecture with LR SerDes and FEC capability on host and line interfaces, giving customers the flexibility for their system designs.

For more information on Marvell’s Alaska C 88X5123 Ethernet transceiver, please visit: http://www.marvell.com/transceivers/alaska-c-gbe/

-

October 06, 2016

Marvell PHYs for Low-Latency Industrial Ethernet

By Kaushik Mittra, Senior Product Marketing Manager, Ethernet PHY products, Connectivity, Storage and Infrastructure Business Unit, Marvell

Part 1 of Two-Part Series

Introducing the Marvell 88E1510P/1512P/1510Q Family of PHY Products

Traditionally, Ethernet has been used in enterprise applications; we are familiar with its use in our office environments. But the IEEE 802.3 family of standards is constantly evolving. Industrial networks present their own set of challenges, and Ethernet and its components are evolving again to address the needs of the factory floor. Connectivity hardware that offers low latency and enhanced electrostatic discharge (ESD) protection while operating in extended temperature environments is invaluable to industrial network implementation. The Marvell 88E1510P/88E1512P/88E1510Q family of PHY (physical layer device) products was designed from the ground up in collaboration with leaders in industrial automation and has been vetted for use in the most demanding industrial applications.

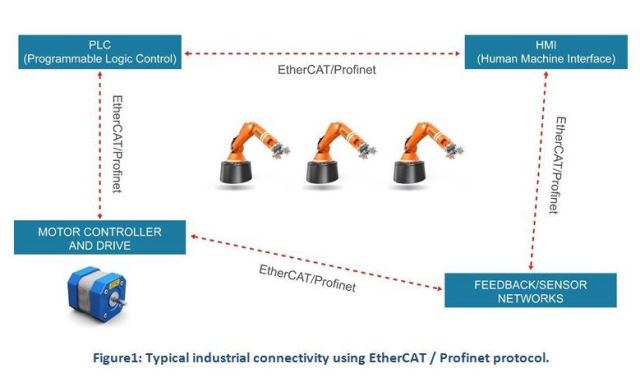

A multitude of communication protocols are used in industrial networks today, including EtherNet/IP, EtherCAT, Profinet and SERCOS III. These are independent and proprietary offerings from different vendors, but they share a common goal: delivering real-time Ethernet to industrial automation applications under harsh environmental conditions. The typical elements of an industrial network might include programmable logic controllers (PLCs), motor controllers and drives, sensor networks and human machine interfaces (HMIs). These elements are connected on the Ethernet backbone using a protocol such as EtherCAT or Profinet. The network topology might be hub-and-spoke (star) or linear. Regardless of topology, the goal is to provide precise control and synchronized timing information to each of the nodes. If the topology is a long daisy chain, each node must add as little latency as possible so that request/response cycle times through the system stay fast.
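Why per-node latency matters in a daisy chain can be seen with back-of-the-envelope arithmetic: each node's delay is paid once per hop, so it multiplies with the chain length. The Python sketch below assumes a frame enters each node through one PHY's receive path and leaves through another PHY's transmit path, and (when `round_trip` is set) that the frame is looped back through every node on its return, as in an EtherCAT-style line. The node count and nanosecond figures in the example are hypothetical.

```python
def chain_latency_ns(nodes: int, phy_rx_ns: float, phy_tx_ns: float,
                     forward_ns: float = 0.0, round_trip: bool = True) -> float:
    """Total transit delay a frame accumulates across a daisy chain of nodes.

    Per node: PHY receive + PHY transmit + any internal forwarding delay.
    With round_trip=True every node is traversed twice (out and back).
    """
    if nodes < 0:
        raise ValueError("node count must be non-negative")
    per_node = phy_rx_ns + phy_tx_ns + forward_ns
    passes = 2 if round_trip else 1
    return nodes * per_node * passes


if __name__ == "__main__":
    # Hypothetical PHY delays: 300 ns vs. 200 ns per node (a one-third reduction).
    slow = chain_latency_ns(100, 200.0, 100.0)
    fast = chain_latency_ns(100, 130.0, 70.0)
    print(slow / 1000, fast / 1000)  # 60.0 40.0 (microseconds)
```

In this model, shaving 100 ns off each node's PHY delay saves 20 microseconds per cycle on a 100-node line, which is why PHY latency matters so much in long chains.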

Looking for a Low-Latency PHY?

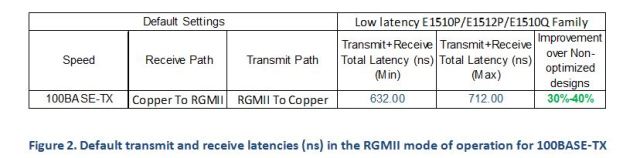

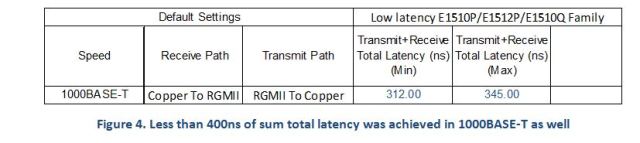

Protocols such as EtherCAT have to process the Ethernet packet and insert new data into the frame as it passes through in real time. For real-time applications, this imposes tight restrictions on the latency through the switch and PHY. With this requirement in mind, we designed the Marvell 88E1510P/1512P/1510Q family of PHY products to address the stringent latency needs of tier-1 industrial customers. The table below shows that the Marvell low-latency PHY operates 30-40 percent faster than non-optimized implementations.

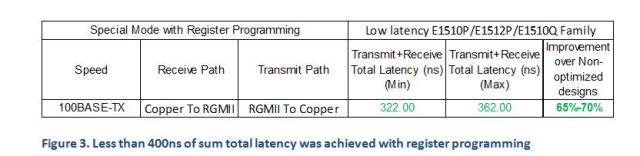

While the data shown above reflects the default configuration, significantly lower latencies are possible with register programming. The sum total of transmit and receive latency was less than 400ns across the entire range, as shown below.

The small latency variation observed (from min to max) is due to the presence of synchronization circuits in the transmit path. Typically, a FIFO (first in, first out queue) is used in the transmit path to compensate for any PPM (parts-per-million) differences between the transmitting and receiving circuits. Depending on packet size and the number of entries in the FIFO, a small variation in latency can be observed. (Note: When the PHY is used for Precision Time Protocol (PTP) timestamping applications, the presence of the FIFO does not affect the accuracy of the timestamps. The timestamps are taken as close to the wire as possible, eliminating the FIFO uncertainty.)
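A quick calculation shows why such a FIFO can stay small and why the latency variation depends on packet size. Assuming the two clocks differ by at most 200 ppm (for example, two oscillators each within ±100 ppm of nominal), the write and read sides of the FIFO drift apart by a number of bits proportional to frame length. The Python sketch below illustrates only that arithmetic; it ignores preamble and inter-frame gap and does not describe the internal design of the Marvell PHY.

```python
import math


def fifo_drift_bits(frame_bytes: int, ppm_offset: float) -> float:
    """Bits by which write and read pointers drift apart over one frame."""
    frame_bits = frame_bytes * 8
    return frame_bits * ppm_offset * 1e-6


def min_fifo_slack_bits(max_frame_bytes: int, ppm_offset: float) -> int:
    """Smallest whole number of bits of slack that absorbs the worst-case drift."""
    return math.ceil(fifo_drift_bits(max_frame_bytes, ppm_offset))


if __name__ == "__main__":
    for size in (64, 1522):  # minimum frame and a maximum-size VLAN-tagged frame
        print(size, round(fifo_drift_bits(size, 200.0), 4))
    print(min_fifo_slack_bits(1522, 200.0))
```

Under these assumptions a minimum-size frame drifts by about a tenth of a bit and a 1522-byte frame by about 2.4 bits, so only a few bits of slack are needed in either direction. Because the drift grows with frame length, the FIFO occupancy (and therefore latency) varies slightly with packet size.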

Future-Proof with 1000BASE-T

While 100BASE-TX speeds are sufficient for the majority of factory applications today, there is a growing need to support 1000BASE-T. Since installing industrial equipment and networks is capital-intensive, it is prudent to use a PHY device that can future-proof the network for speeds up to 1000BASE-T. The Marvell 88E1510P/1512P/1510Q family of PHY products supports 10BASE-T, 100BASE-TX and 1000BASE-T. The low latency ranges observed in 1000BASE-T mode are shown below.

Extended Temperature Operation and ESD support

In an industrial environment, it is difficult to control temperatures on the plant floor, where surrounding equipment may operate at high temperatures and good ventilation can be hard to provide. Industrial motors and robots connected by an Ethernet network often work alongside processes such as metal welding that generate very high temperatures.