By Mary Gorges, Talent Brand Content Manager, Marvell

“My advice for high schoolers is to build a strong foundation in math and physics. If computer science courses are available, take them.”

Marvell has always been at the forefront of innovation, and this summer, we welcomed a groundbreaking addition to our team: Ejaz Ahamed Shaik, our first-ever GenAI intern. He’s among more than 400 global interns at Marvell this summer.

Many of us use GenAI, but what does someone whose job title is GenAI actually do? And how did Ejaz prepare for his internship when GenAI arrived on the scene less than two years ago?

Let’s go ask him.

Q: First, how does it feel to be Marvell's first GenAI intern?

Ejaz: It's an incredible opportunity. The job market for software development engineers is now very competitive, especially with advancements in AI. Being at Marvell, where there's a strong focus on innovation, allows me to work on cutting-edge projects and contribute to the future of technology. I do get a lot of attention. There’s a general curiosity among others about what I do.

Q: What are you currently working on at Marvell?

Ejaz: My main focus is developing a retrieval-augmented generation (RAG) system to assist developers with Verilog queries and design issues. (What’s that mean?) This system aims to detect early anomalies in the design process of a chip, which can be costly if not identified before verification. By integrating advanced large language model (LLM) tools, I'm creating a solution that provides accurate and timely insights to developers, streamlining the design and verification process. We're also working on developing a custom large language model specifically for addressing Verilog design and verification issues.
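
For readers unfamiliar with the term, the retrieval half of a RAG system can be sketched in a few lines of Python. This is a minimal illustration only, not the system Ejaz describes: the three Verilog notes, the word-count similarity measure and the function names `retrieve` and `build_prompt` are all assumptions made for the example, and a production system would more likely use learned embeddings and a vector index before passing the prompt to an LLM.

```python
import math
import re
from collections import Counter

# Hypothetical notes standing in for an indexed Verilog knowledge base.
DOCS = [
    "Use non-blocking assignments in clocked always blocks to avoid race conditions.",
    "An incomplete if or case statement in a combinational always block infers a latch.",
    "Declare a signal as wire when it is driven by a continuous assign statement.",
]


def tokenize(text):
    """Lowercase the text and split it into alphabetic words."""
    return re.findall(r"[a-z]+", text.lower())


def cosine(a, b):
    """Cosine similarity between two word-count vectors (Counters)."""
    dot = sum(count * b[word] for word, count in a.items() if word in b)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query, docs, k=1):
    """Return the k documents most similar to the query."""
    q = Counter(tokenize(query))
    ranked = sorted(docs, key=lambda d: cosine(q, Counter(tokenize(d))), reverse=True)
    return ranked[:k]


def build_prompt(query, docs, k=1):
    """Prepend the retrieved context to the question, ready to send to an LLM."""
    context = "\n".join(retrieve(query, docs, k))
    return f"Context:\n{context}\n\nQuestion: {query}\nAnswer:"


if __name__ == "__main__":
    print(build_prompt("Why did my combinational block infer a latch?", DOCS))
```

The generation step is deliberately left out: the prompt returned by `build_prompt` is what would be handed to a language model, so that its answer is grounded in the retrieved note rather than in the model's memory alone.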

This article is the final installment in a series on talks delivered at Accelerated Infrastructure for the AI Era, a one-day symposium held by Marvell in April 2024.

AI demands are pushing the limits of semiconductor technology, and hyperscale operators are at the forefront of adoption—they develop and deploy leading-edge technology that increases compute capacity. These large operators seek to optimize performance while simultaneously lowering total cost of ownership (TCO). With billions of dollars on the line, many have turned to custom silicon to meet their TCO and compute performance objectives.

But building a custom compute solution is no small matter. Doing so requires a large IP portfolio, significant R&D scale and decades of experience to create the mix of ingredients that make up custom AI silicon. Today, Marvell is partnering with hyperscale operators to deliver custom compute silicon that’s enabling their AI growth trajectories.

Why are hyperscale operators turning to custom compute?

Hyperscale operators have always been focused on maximizing both performance and efficiency, but new demands from AI applications have amplified the pressure. According to Raghib Hussain, president of products and technologies at Marvell, “Every hyperscaler is focused on optimizing every aspect of their platform because the order of magnitude of impact is much, much higher than before. They are not only achieving the highest performance, but also saving billions of dollars.”

Hyperscale operators run multiple business models in the cloud, including internal apps, infrastructure-as-a-service (IaaS) and software-as-a-service (SaaS), the last of which is the fastest-growing market thanks to generative AI. Across all of them, operators are constantly seeking ways to improve their total cost of ownership. Custom compute allows them to do just that. Operators are first adopting custom compute platforms for their mass-scale internal applications, such as search and their own SaaS applications. Next up for greater custom adoption will be third-party SaaS and IaaS, where the operator offers its own custom compute as an alternative to merchant options.

Progression of custom silicon adoption in hyperscale data centers.

By Mary Gorges, Talent Brand Content Manager, Marvell

Marvell principal hardware engineer Simon Xu regularly goes from circuits to the courts. In his time outside of the semiconductor company, he's a mentor to his twin daughters, Annie and Kerry.

And not just any mentor. This July, the 24-year-old twins will be heading to the Paris Olympics to compete side-by-side in women’s doubles badminton. This is backyard badminton taken to a whole other level. “Badminton has the fastest flying objects in Olympic sports,” says Annie. “The bird can go more than 300 miles an hour over the net. For this level of play, you need extremely good body coordination, fast reflexes, lots of stamina and strong arms.”

By Michael Kanellos, Head of Influencer Relations, Marvell

Aaron Thean points to a slide featuring the downtown skylines of New York, Singapore and San Francisco along with a prototype of a 3D processor and asks, “Which one of these things is not like the other?”

The answer? While most gravitate to the processor, San Francisco is a better answer. With a population well under 1 million, the city’s internal transportation and communications systems don’t come close to the level of complexity, performance and synchronization required by the other three.

With future chips, “we’re talking about trillions of transistors on multiple substrates,” said Thean, the deputy president of the National University of Singapore and the director of SHINE, an initiative to expand Singapore’s role in the development of chiplets, during a one-day summit sponsored by Marvell and the university.

This article is part five in a series on talks delivered at Accelerated Infrastructure for the AI Era, a one-day symposium held by Marvell in April 2024.

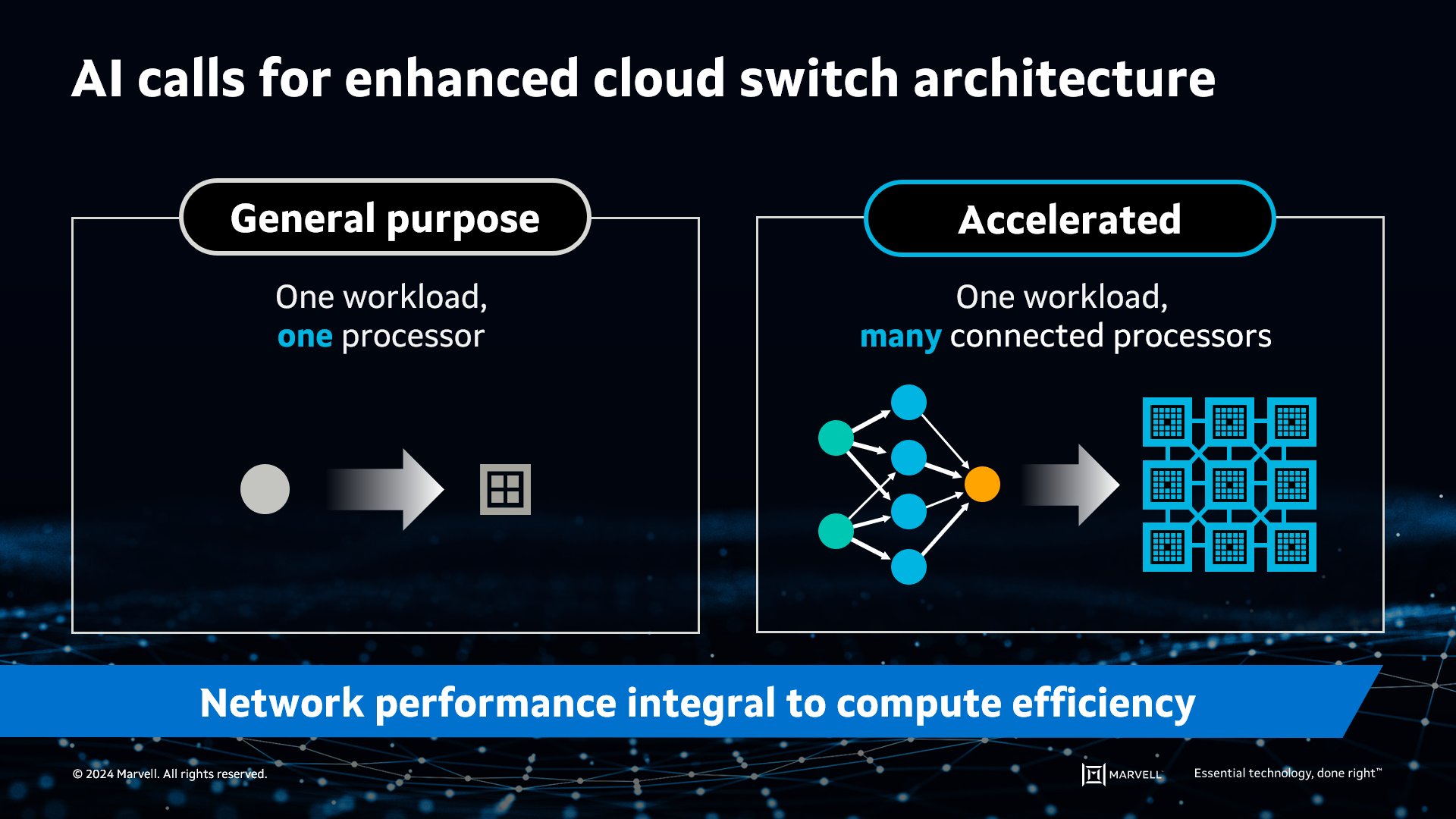

AI has fundamentally changed the network switching landscape. AI requirements are driving foundational shifts in the industry roadmap, expanding the use cases for cloud switching semiconductors and creating opportunities to redefine the terrain.

Here’s how AI will drive cloud switching innovation.

A changing network requires a change in scale

In a modern cloud data center, the compute servers are connected to each other and to the internet through a network of high-bandwidth switches. The approach is like that of the internet itself, allowing operators to build a network of any size while mixing and matching products from various vendors to create a network architecture specific to their needs.

Such a high-bandwidth switching network is critical for AI applications, and a higher-performing network can lead to a more profitable deployment.

However, expanding and extending the general-purpose cloud network to AI isn’t quite as simple as just adding more building blocks. In the world of general-purpose computing, one or more workloads can fit on a single server CPU. In contrast, AI’s large datasets don’t fit on a single processor, whether it’s a CPU, GPU or other accelerated compute device (XPU), making it necessary to distribute the workload across multiple processors. These accelerated processors must function as a single computing element.

AI requires accelerated infrastructure to split workloads across many processors.
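
The idea of splitting one workload across many processors and then recombining the results can be sketched in plain Python. This is a toy illustration under stated assumptions: the "processors" are simulated inside a single process, the helper names `shard` and `distributed_sum_of_squares` are hypothetical, and the sum of squares stands in for a real AI computation. In an actual cluster, the final combining step is where data crosses the switching network.

```python
def shard(data, num_workers):
    """Split data into num_workers contiguous shards of nearly equal size."""
    base, extra = divmod(len(data), num_workers)
    shards, start = [], 0
    for i in range(num_workers):
        # The first `extra` workers take one additional item each.
        size = base + (1 if i < extra else 0)
        shards.append(data[start:start + size])
        start += size
    return shards


def distributed_sum_of_squares(data, num_workers):
    """Compute a partial result per shard, then combine the partials."""
    # Each list entry stands in for the work done on one processor.
    partials = [sum(x * x for x in s) for s in shard(data, num_workers)]
    # Combining the partials is the step that needs the network in practice.
    return sum(partials)


if __name__ == "__main__":
    data = list(range(10))
    print(shard(data, 4))
    print(distributed_sum_of_squares(data, 4))
```

The result is the same regardless of how many workers the data is split across, which is the sense in which the processors behave as a single computing element.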