If you’re one of the 100+ million monthly users of ChatGPT—or have dabbled with Google’s Bard or Microsoft’s Bing AI—you’re proof that AI has entered the mainstream consumer market.

And what’s entered the consumer mass market will inevitably make its way to the enterprise, an even larger market for AI. There are hundreds of generative AI startups racing to make it so. And those responsible for making these AI tools accessible, cloud data center operators, are investing heavily to keep up with current and anticipated demand.

Of course, it’s not just the latest AI language models driving the coming infrastructure upgrade cycle. Operators will pay equal attention to improving general-purpose cloud infrastructure, as well as taking steps to further automate and simplify operations.

To help operators meet their scaling and efficiency objectives, today Marvell introduces Teralynx® 10, a 51.2 Tbps programmable 5nm monolithic switch chip designed to address the operator bandwidth explosion while meeting stringent power- and cost-per-bit requirements. It’s intended for leaf and spine applications in next-generation data center networks, as well as AI/ML and high-performance computing (HPC) fabrics.

A single Teralynx 10 replaces twelve switch chips of the 12.8 Tbps generation, the last generation to see widespread deployment. The resulting savings are impressive: an 80% power reduction for equivalent capacity.
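
One plausible way to arrive at the twelve-chip figure is sketched below. It assumes the comparison is against a non-blocking, two-tier leaf-spine fabric built from 12.8 Tbps chips, with each leaf splitting its bandwidth evenly between hosts and spines. The post does not state the topology, so the function name and the even split are illustrative assumptions, not Marvell's published method.

```python
def chips_for_nonblocking_fabric(target_gbps: int, chip_gbps: int) -> dict:
    """Count chips needed to build `target_gbps` of non-blocking capacity
    from smaller chips in a two-tier leaf-spine fabric (assumed topology)."""
    # Assumption: each leaf dedicates half its bandwidth to hosts, half to spines.
    leaf_down_gbps = chip_gbps // 2
    # Leaves needed to expose the target host-facing capacity (ceiling division).
    leaves = -(-target_gbps // leaf_down_gbps)
    # Spines must terminate every leaf uplink at full rate.
    uplink_gbps = leaves * leaf_down_gbps
    spines = -(-uplink_gbps // chip_gbps)
    return {"leaves": leaves, "spines": spines, "total": leaves + spines}


fabric = chips_for_nonblocking_fabric(target_gbps=51_200, chip_gbps=12_800)
print(fabric)  # {'leaves': 8, 'spines': 4, 'total': 12}
```

Under these assumptions, eight leaves and four spines of the older generation are needed to match the 51.2 Tbps that one Teralynx 10 provides.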

As I mentioned in the previous post, AI/ML, HPC and other distributed applications need more than just bandwidth for optimal performance. They need ultra-low, deterministic latency to minimize time-in-networking and to unclog the network bottlenecks that delay job completion and can reduce cloud service revenues.

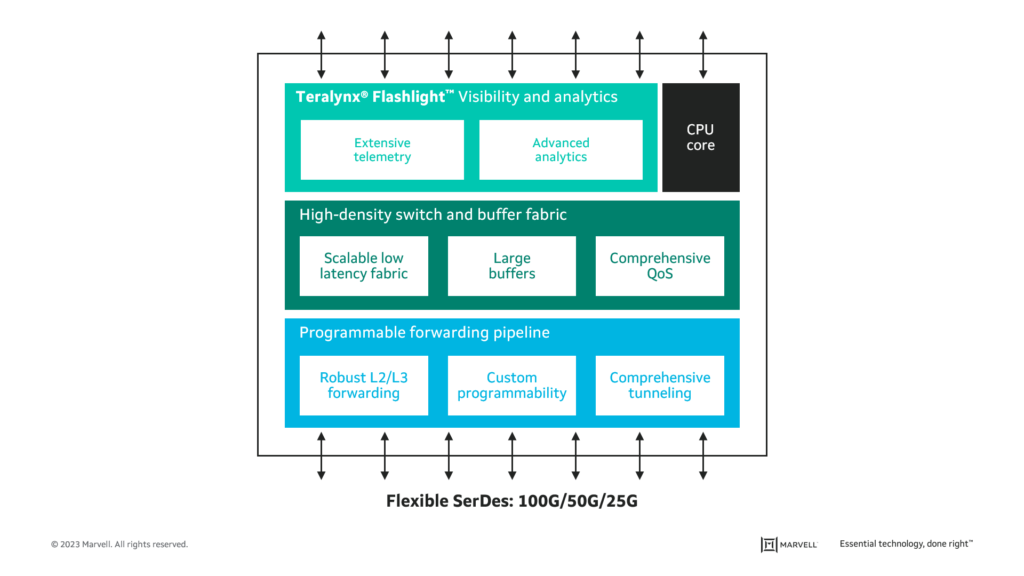

The Teralynx architecture was purpose-built to address just such a challenge. I say “architecture” because the Teralynx 10 is the latest chip to make use of a common, unique-to-Teralynx, ultra-low-latency switch and buffer fabric, with the lowest latency of any programmable switch. On top of low latency, Teralynx 10 supports congestion-aware routing and real-time streaming telemetry that enable the network to auto-tune and self-heal. With line-rate programmability, new protocols and features can be added to address evolving requirements for AI/ML.

Here’s a quick feature and benefits run-down:

Proven, robust 112G SerDes

Teralynx 10 features 512 long-reach (LR) 112G SerDes. And not just any SerDes: this high-performance SerDes is found throughout the Marvell portfolio and sports the industry’s lowest bit error rate (BER). With it, switch system vendors can develop a wide range of switch configurations, such as 32 x 1.6T, 64 x 800G, and 128 x 400G, giving them the flexibility to address the unique needs of each operator.
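
The port configurations above follow from simple lane arithmetic. The sketch below assumes each 112G SerDes lane carries 100 Gbps of usable Ethernet bandwidth (the usual convention for this lane class, though not stated in the post). The constant and function names are hypothetical.

```python
LANES = 512          # SerDes count quoted in the post
GBPS_PER_LANE = 100  # assumption: usable Ethernet rate per 112G lane


def port_configs(port_speeds_gbps):
    """Map each port speed to (port count, lanes per port)."""
    configs = {}
    for speed in port_speeds_gbps:
        lanes_per_port, remainder = divmod(speed, GBPS_PER_LANE)
        if remainder or lanes_per_port == 0 or LANES % lanes_per_port:
            raise ValueError(f"{speed}G does not map evenly onto {LANES} lanes")
        configs[speed] = (LANES // lanes_per_port, lanes_per_port)
    return configs


total_tbps = LANES * GBPS_PER_LANE / 1000
print(total_tbps)                       # 51.2
print(port_configs([1600, 800, 400]))   # {1600: (32, 16), 800: (64, 8), 400: (128, 4)}
```

Each configuration uses all 512 lanes, so every option adds up to the same 51.2 Tbps of switching capacity.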

Programmable forwarding

Teralynx 10 offers a comprehensive data center feature set, including IP forwarding, tunneling, rich QoS and robust RDMA. It also offers permutable flex-forwarding to enable operators to program new packet forwarding protocols as network requirements evolve, with no compromise to throughput, latency or power.

Ultra-low-latency fabric

Teralynx 10 is built on the next generation of the lowest latency fabric architecture in a programmable switch. That low latency helps to unclog network bottlenecks, minimizes time spent in networking, and shortens job completion time for distributed, demanding applications such as high-performance computing and machine learning training. This helps operators maximize the utilization of their compute resources—and the revenue associated with those resources.

Teralynx® Flashlight™ advanced telemetry

Teralynx 10 supports extensive real-time network telemetry, including P4 in-band network telemetry (INT). These capabilities enable predictive analytics, faster issue resolution and a higher degree of automation, all to reduce OPEX and increase network uptime.

Teralynx software

Teralynx 10 is fully software compatible with Teralynx 7, minimizing time and cost to transition for existing customers. The software toolkit, which includes an SDK and SAI for ease of NOS porting, is robust, high-performance and portable.

Teralynx 10 in a nutshell

Teralynx 10 will sample in Q2. We look forward to continuing our customer engagements and sharing more information as we sample the product.

Stay tuned and see you at OFC!

